Note added January 3, 2022: In section VI of this post we noted the need to convince users to switch to social media platforms that avoid the click-bait advertising business model at the root of many social media failings. We thank one of our readers for alerting us to the existence of such an alternative platform, of which we were previously unaware. CounterSocial, created and supported by the hacktivist known as The Jester, advertises itself as “Next-Gen Social Media” with “NO trolls. NO abuse. NO ads. NO fake news. NO foreign influence ops.” You can read on their website about the algorithms it uses to support these claims. CounterSocial (or CoSo) is funded in part from Pro account subscriptions (most subscriptions are free) and private donations, but mostly to date by The Jester him/herself. It was created in 2017 and to date there are more than 30,000 subscribers. It has uniformly excellent user reviews, with one user claiming “This is everything social media should be.” Our reader put it this way: “Not only works, but the financial model further doesn’t rely on the selling and manipulation of user data. CounterSocial is a community of diverse and fair-minded folks who enjoy sharing art, humor, life lessons, science, music, friendly support, etc. which is all achieved with civility.” We invite our readers to check CounterSocial out. We certainly will ourselves.

July 21, 2021

I. Introduction

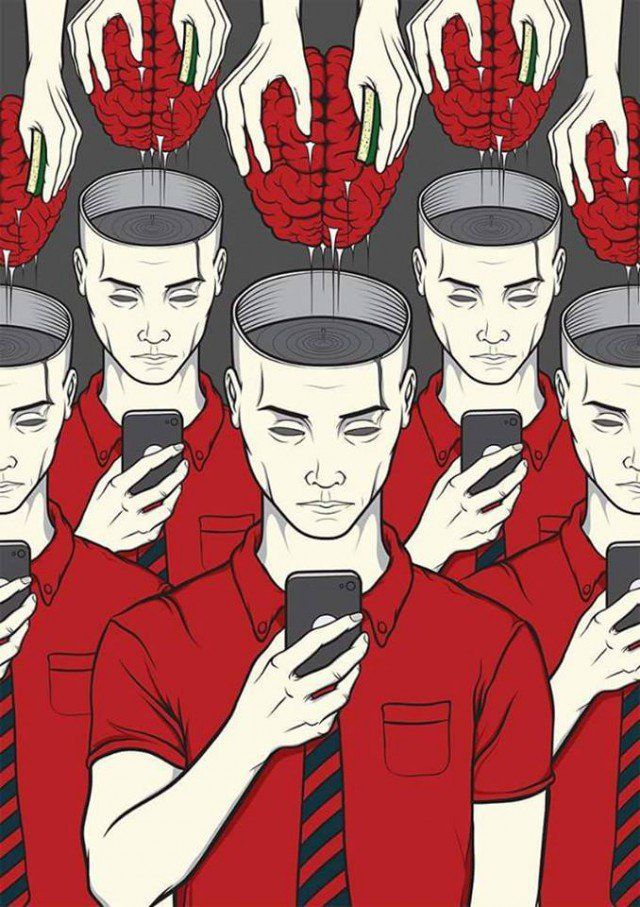

In our earlier post on Trump’s Cult of Personality, we noted in passing that “social media turn out to provide the most efficient technique ever invented for brainwashing.” In the present post we elaborate on this comment, explaining how both the designers of social media platforms and would-be brainwashers exploit the malleability of the human brain to enhance their efforts. We make extensive reference to Kathleen Taylor’s book Brainwashing: The Science of Thought Control, in which she lays out the ways in which our minds, perceptions, ideas, beliefs and behaviors are creatures of neuronal habit. Those who seek to monopolize your attention or manipulate your beliefs aim to exert their influence over the “training” of neuronal pathways – the same training that allows infants to learn quickly and adults to establish and focus on their interests, and that occasionally leads them to become addicted to substances or behaviors that jeopardize their health.

We describe how those neuronal pathways and the cognitive webs they form operate in conjunction with various parts of the human brain. We show how the business models adopted by most social media platforms are designed to be addictive and to incentivize confirmation bias and tribalism. We discuss how those incentives facilitate the brainwasher’s techniques, in mass influence campaigns, to reprogram their target’s neuronal habits, by strengthening certain cognitive webs at the expense of others and bypassing parts of the brain that weigh new inputs against prior knowledge, evidence and experience. At the end of this post we suggest possible ways to reform social media in order to diminish their use in the viral spread of misinformation. But those reforms are useful only in conjunction with individual efforts, which we have addressed elsewhere on this site, to improve the critical thinking and bullshit detection that allow one to distinguish real education from external attempts at mind control.

II. Dopamine hits and social media silos

The internet was launched with the promise to facilitate “crowd wisdom.” Perhaps it was inevitable that it would come to be dominated instead by the spread of “viral nonsense.” Indeed, some aspects of such a transformation were foreseen long before the internet even existed. In a piece on the Fast Company website, Renee DiResta points out that: “In his 1962 book, The Image: A Guide to Pseudo-Events in America, former Librarian of Congress Daniel J. Boorstin describes a world where our ability to technologically shape reality is so sophisticated, it overcomes reality itself. ‘We risk being the first people in history,’ he writes, ‘to have been able to make their illusions so vivid, so persuasive, so ‘realistic’ that they can live in them.’”

With regard to the political use of misinformation spread, Ed Herman and Noam Chomsky were prescient in their 1988 book Manufacturing Consent. They noted how privately owned media in the hands of a few super-wealthy entrepreneurs could “function as a propaganda system that deceived its readers quite as efficiently as a heavy-handed government censor.” They were writing about newspapers and identified a set of filters through which information would have to pass before being released to a media audience. As summarized by Justin Podur: “Concentrated media ownership helped ensure that media reflected the will of its wealthy, corporate owners; reliance on official sources forced journalists and editors to make compromises with the powerful to ensure continued access; shared ideological premises, including the hatred of official enemies, biased coverage toward the support of war; the advertising business model filtered out information that advertisers didn’t like; and an organized ‘flak’ machine punished journalists who stepped out of line, threatening their careers.”

We have seen all of these filters play out in the modern world of newspaper publishing (e.g., the Murdoch empire, which recently ordered a New York Post reporter to publish a completely fake story about the Biden administration handing out copies of Kamala Harris’ children’s book to asylum-seekers at the Mexican border) and TV media (Fox News, Newsmax, One America News). But it is especially true, and more technologically supported, in social media that are controlled by a very concentrated set of big tech titans at Facebook, Google and Twitter. Podur summarizes: “The tech giants are advertising companies at their heart, and so all of the problems that came with the legacy media being driven by advertisers remain in the new environment. Two years ago a report out of Columbia University described the new business model of media, ‘the platform press,’ in which technology platforms are the publishers of note, and these platforms ‘incentivize the spread of low-quality content over high-quality material.’”

In the case of social media, the viral spread of this low-quality content does not reflect the political biases of the tech giants so much as the adoption of a business model whose consequences were only partially foreseen by its developers. The social networking software developed at Harvard University by Mark Zuckerberg and several fellow students formed the basis of the Facebook company launch in February 2004. But for its first several years of existence, it was not clear how Facebook would be turned into a profitable business. In October 2008, Zuckerberg said “I don’t think social networks can be monetized in the same way that search did … In three years from now we have to figure out what the optimum model is. But that is not our primary focus today.”

It became the primary focus after Facebook hired Sheryl Sandberg as Chief Operating Officer in March 2008. Brainstorming sessions Sandberg convened at Facebook led to the adoption of a business model in which advertising revenues would lead to profitability beginning in September 2009. According to its 2017 Annual Report, Facebook now defines itself this way: “Facebook enables people to connect, share, discover, and communicate with each other on mobile devices and personal computers. There are a number of different ways to engage with people on Facebook, the most important of which is News Feed which displays an algorithmically ranked series of stories and advertisements individualized for each person.” Nearly all of Facebook’s revenue comes from marketers who pay a small fee per click their advertisements receive on pages of Facebook or its subsidiaries Messenger, Instagram and WhatsApp.

Facebook users find it attractive that they don’t have to pay to use the services. But in return, Facebook is singularly focused on monopolizing its users’ time, in order to increase advertiser clicks and its own profitability. Sean Parker, Facebook’s founding president, admits that the company’s goal has been to “consume as much of your time and conscious attention as possible,” by creating “a social-validation feedback loop . . . exactly the kind of thing that a hacker like myself would come up with, because you’re exploiting a vulnerability in human psychology.” This goal is accomplished by giving users “a little dopamine hit every once in a while, because someone liked or commented on a photo or a post or whatever. And that’s going to get you to contribute more content and that’s going to get you…more likes and comments.” Increasingly, those dopamine hits are delivered by the News Feed because the user was fed an attention-grabbing low-quality piece of information that confirmed his or her biases.

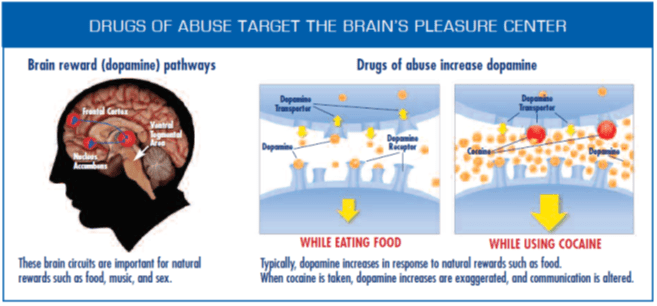

Dopamine is one of the seven small-molecule neurotransmitter chemical messengers that do most of the work in carrying signals within the neurological system across the tiny gaps, called synapses, between sending neurons (axons) and their targeted receiver neurons (dendrites). Figure 1 illustrates schematically how the neurotransmitters bridge the synaptic gaps. When a neurotransmitter molecule mates with a selective receptor molecule on the membrane of the dendrite, as a key does with a lock, this mating opens ion channels that allow more neurotransmitters to enter the dendrite. The effect of the neurotransmitters entering the cell of the receiving neuron through the opened ion channel is often to modify the receiving cell by affecting gene expression within it. Depending on the outcome of the transmitted signal, the changes to gene expression can lead, for example, to an increase or decrease in the number of receptors set up on the dendrite membranes, thereby increasing or decreasing the neuron’s receptivity to future signals involving the same neurotransmitters.

As Kathleen Taylor explains: “This ability of cells to alter the strength of the synapses between them is the secret of the brain’s power to learn from experience…By linking synaptic strengths to how active neurons are, the brain sculpts its cognitive landscape according to the stimuli it receives. Just as water flowing over the ground carves out a channel, and thus over time flows more and more easily, so signals flow between neurons, strengthening connections between them, and making it easier for future signals to flow.” The goal of social media companies and of brainwashers is to control the stimuli the brain receives in order to modify its cognitive landscape.
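Taylor’s channel-carving analogy can be made concrete with a toy calculation. The following is a minimal sketch of a Hebbian-style update rule, a textbook simplification rather than anything taken from Taylor’s book; the function name `strengthen`, the learning rate and the ceiling are all illustrative assumptions.

```python
def strengthen(weight, pre_active, post_active, rate=0.1, ceiling=1.0):
    """Toy Hebbian-style rule (an assumption, not a physiological model):
    a connection grows only when both neurons are active together."""
    if pre_active and post_active:
        # Close a fixed fraction of the remaining gap to the ceiling,
        # so each repetition adds a little less than the one before.
        weight += rate * (ceiling - weight)
    return weight


weight = 0.2
history = [weight]
for _ in range(10):
    # The same stimulus repeated ten times keeps reinforcing the same path.
    weight = strengthen(weight, pre_active=True, post_active=True)
    history.append(weight)

print([round(w, 3) for w in history])
```

In this sketch, repetition alone is enough to deepen the “channel”: the connection strength rises with every co-activation and never exceeds the ceiling, while a stimulus that fails to activate both neurons leaves it unchanged.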

Dopamine activity, in particular, is central to the brain’s learning about which stimuli deliver pleasurable rewards reliably and which do not. In response to stimuli that carry the promise of such a reward, dopamine is released from sending neurons very rapidly – within 70-100 milliseconds, faster than one can “feel” an emotion – to alert the brain to “good stuff coming.” As summarized on a Harvard website: “Unexpected rewards increase the activity of dopamine neurons, acting as positive feedback signals for the brain regions associated with the preceding behavior. As learning takes place, the timing of dopamine activity will shift from reward receipt until it occurs upon the cue alone, with the expected reward having no additional effect. And should the expected reward not be received, dopamine activity drops, sending a negative feedback signal to the relevant parts of the brain, weakening the positive association with that particular cue.”
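The feedback signal described in this summary is often modeled as a “reward prediction error.” The sketch below uses a simple Rescorla-Wagner style update, which is a standard textbook simplification and our own assumption rather than the model used by the Harvard authors; `run_trials`, the learning rate and the trial counts are hypothetical. It captures only the size of the signal at the time the reward is due, not the shift in timing toward the cue.

```python
def run_trials(rewards, learning_rate=0.3):
    """Track how strongly a cue predicts reward across repeated trials.

    The prediction error (reward received minus reward expected) stands
    in for the change in dopamine activity when the reward is due.
    """
    value = 0.0          # current expectation attached to the cue
    errors = []
    for reward in rewards:
        error = reward - value           # positive: better than expected
        errors.append(error)
        value += learning_rate * error   # nudge the expectation toward reality
    return value, errors


# Twenty rewarded trials, then one trial where the expected reward is withheld.
rewards = [1.0] * 20 + [0.0]
value, errors = run_trials(rewards)

print(f"first rewarded trial: {errors[0]:+.3f}")
print(f"last rewarded trial:  {errors[19]:+.3f}")
print(f"reward withheld:      {errors[20]:+.3f}")
```

Under these assumptions the error is large when the reward is unexpected, shrinks toward zero once the reward is fully anticipated, and turns sharply negative when an anticipated reward fails to arrive, mirroring the three cases in the quoted summary.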

Not all stimuli that deliver pleasurable rewards have positive effects on a person’s well-being. Addiction to drugs of abuse, for example, is triggered by flooding the brain’s circuits with dopamine (see Fig. 2). Facebook and other social media platforms are designed to induce addiction among users, but without the benefit of the dopamine amplification that abusive drugs produce. As psychologist Adam Alter puts it in his book Irresistible about technology addiction: “The companies that are producing these products, the very large tech companies in particular, are producing them with the intent to hook. They’re doing their very best to ensure not that our wellbeing is preserved, but that we spend as much time on their products and on their programs and apps as possible. That’s their key goal: it’s not to make a product that people enjoy and therefore becomes profitable, but rather to make a product that people can’t stop using and therefore becomes profitable.”

In order to induce addiction, the social media platforms develop and exploit opaque algorithms whose goal is to gather as much information as possible about each user’s lifestyle, biases, likes and interests, and then to deliver frequent, reliable, targeted doses of what that user wants to see, in the form of selected posts by other users, news articles and advertisements. As Renee DiResta explains, these algorithms “work to boost conspiracy theories, move users to more extreme content and positions, confirm the biases of the searcher, and incentivize the outrageous and offensive…once people join a single conspiracy-minded group, they are algorithmically routed to a plethora of others… Rather than pulling a user out of the rabbit hole, the recommendation engine pushes them further in. We are long past merely partisan filter bubbles and well into the realm of siloed communities that experience their own reality and operate with their own facts.” Those silos, as we will see, are a brainwasher’s dream.
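The self-reinforcing character of such a recommendation loop can be illustrated with a deliberately crude simulation. This is a hypothetical sketch, not any platform’s actual algorithm (those are proprietary and far more elaborate); the post list, the topic labels, the `rank_feed` function and the assumption that the user clicks only on one agreeable topic are all invented for illustration.

```python
from collections import Counter


def rank_feed(posts, click_counts, size=3):
    """Hypothetical ranking rule: show the topics this user clicked most."""
    ranked = sorted(posts, key=lambda p: click_counts[p["topic"]], reverse=True)
    return ranked[:size]


topics = ["sports", "politics_a", "politics_b", "science",
          "politics_a", "cooking", "politics_a"]
posts = [{"id": i, "topic": t} for i, t in enumerate(topics)]

# A single early click is all the ranking rule knows about the user.
click_counts = Counter({"politics_a": 1})

for _ in range(5):
    feed = rank_feed(posts, click_counts)
    for post in feed:
        # Assumed user behavior: click only on posts that confirm one's lean.
        if post["topic"] == "politics_a":
            click_counts[post["topic"]] += 1

print([post["topic"] for post in feed])
print(dict(click_counts))
```

In this toy setup a single click is enough to fill the whole feed with one topic, and every subsequent session widens the gap between that topic and everything else. Real systems weigh many more signals, but the feedback structure is the point of the sketch.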

The social media companies do not bear the burden of producing the viral content themselves. Here they benefit from the proliferation of zero-cost electronic publishing. DiResta again: “Self-publishing has eliminated all the checks and balances of reputable media―fact-checkers, editors, distribution partners… Social platforms–in their effort to keep users continually engaged (and targeted with relevant ads)–are designed to surface what’s popular and trending, whether it’s true or not. Since nearly half of web-using adults now get their news from Facebook in any given week, what counts as ‘truth’ on our social platforms matters. When nonsense stories gain traction, they’re extremely difficult to correct. And stories jump from platform to platform, reaching new audiences and ‘going viral’ in ways and at speeds that were previously impossible…the Internet doesn’t just reflect reality anymore; it shapes it…The problem is that social-web activity is notorious for an asymmetry of passion. On many issues, the most active social media voices are the conspiracist fringe.”

Misinformation does not have to be spread by humans. Since much click-bait content can be automated by bots, much of the Internet is now fake; as Max Read put it in a New York Magazine article: “fake people with fake cookies and fake social-media accounts, fake-moving their fake cursors, fake-clicking on fake websites.”

The founders of Facebook knowingly developed algorithms that exploited this “vulnerability in human psychology,” but they may not have foreseen all the consequences at the start. Many of them do now, as evidenced by their extreme caution in using, or allowing their children to use, their own platforms. They are following a rule for drug pushers and dealers popularized by The Notorious B.I.G.: “Never get high on your own supply.” At a conference in 2017 Sean Parker called himself “something of a conscientious objector” to social media. Shortly thereafter, former Facebook Vice President for user growth Chamath Palihapitiya told a conference audience: “The short-term, dopamine-driven feedback loops that we have created are destroying how society works. No civil discourse, no cooperation; misinformation, mistruth. This is not about Russian ads. This is a global problem. It is eroding the core foundations of how people behave by and between each other. I can control my decision, which is that I don’t use that shit. I can control my kids’ decisions, which is that they’re not allowed to use that shit.”

In response to Palihapitiya’s comments, a Facebook company spokeswoman replied: “When Chamath was at Facebook, we were focused on building new social media experiences and growing Facebook around the world. Facebook was a very different company back then … as we have grown, we have realised how our responsibilities have grown, too. We take our role very seriously and we are working hard to improve.” They’re still working at it, as are the corporate leaders at Twitter, Google and other social media platforms. We’ll come back at the end of this post to consider the sort of serious reforms that may be needed to gradually roll back the damage that’s already been done.

III. A brief introduction to the neuroscience of the human brain

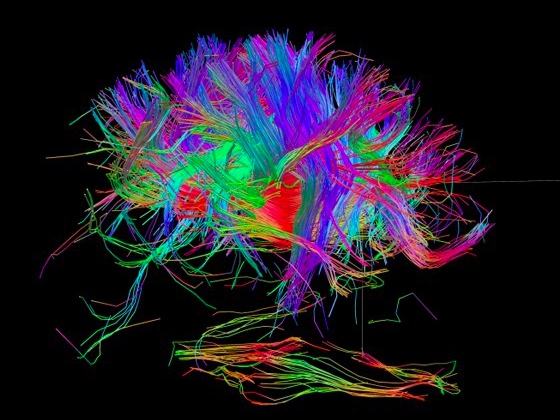

The brain is a communications and control center exchanging signals among its various parts, as well as with the spinal cord and the body’s organs, via billions of neurons. A hint of the complexity of the neural networks is shown in Fig. 3, representing imaging of a subset of neurons connecting to the brain. Other views can be seen on the website humanconnectomeproject.org. Each of these neurons is subject to the kind of training from frequent stimulation discussed above for dopamine pathways.

The human brain has to carry out myriad tasks simultaneously, for example: monitoring and regulating necessary bodily functions and properties such as breathing, eating, sleeping, heart rate, body temperature, circadian rhythm, fluid and hormone balance; registering sensory perceptions from all parts of the body and guiding responses to those perceptions; governing our motor functions, speech, hearing, and understanding of language; registering and responding to emotions; formulating and retrieving thoughts, ideas, beliefs, and memories; supporting creativity and judgments. It accomplishes these tasks via a high degree of compartmentalization, as suggested in Figs. 4 and 5.

The brain’s compartmentalization is by no means exclusive. The processing and response to most stimuli involve multiple parts of the brain’s anatomy and many neurons forming layers of cognitive webs, or cogwebs for short. Different parts of the brain respond with different characteristic timelines and different degrees of complexity of the cogwebs involved, offering both rapid, reflexive responses to external stimuli that are perceived fuzzily at first and slower, more thoughtful responses as cortical areas of the brain become involved in more complex cogwebs to refine the perceptions and the responses. The final stage often involves the brain’s prefrontal cortex (PFC), which evaluates potential responses to external stimuli in the light of expectations based on a person’s past experience and learning.

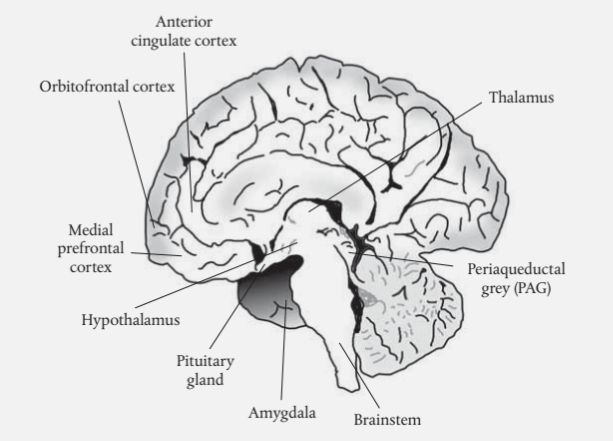

The brain’s processing of emotions provides an illustrative example of the different cogwebs and timelines involved, an example particularly relevant to discussions of brainwashing. The parts of the brain most involved in emotional processing are labeled in Fig. 6. The most immediate pathways involved in emotional response to stimuli that elicit fear or a sense of danger, or other comparably strong, learned emotional responses, proceed along neurons through the subcortical areas of the brain, particularly the thalamus and amygdala, and thence directly to the body via neuronal signals or hormone (e.g., adrenaline or glucocorticoid) release stimulated by the hypothalamus and pituitary gland.

This immediate pathway generates appropriate physiological responses, such as running away, hair standing on end, screaming, increased heart rate, etc. These are evolutionarily developed short-cuts to avoid predators and dangers. As Kathleen Taylor explains: “As with beliefs, the frequently inaccessible nature of our emotions assists our efficient functioning in the world. We do not have time to reflect on every feeling, just as we do not have time to analyse every perception or cognition – short-cuts prevent us from grinding to a perplexed and fatal standstill…They serve as override switches for emergencies, for times when we cannot afford to process all the information fully, when speed of reaction may cross the gap between extinction and survival.”

While these physiological short-cuts are activated, the brain begins the more time-consuming process of using areas in both the sub-cortex and the cortex, in increasingly complex cogwebs, to make sense of the emotions excited and of their appropriateness to the external stimuli. The thalamus sends signals generated by external stimuli not only to the brain stem for immediate distribution to organs, but also to areas in the cortex to initiate the slower processes of interpretation and possible modulation of the initial responses.

Brain imaging studies have shown, for example, that the amygdala is involved in both the short-cut and interpretation responses: “The amygdala receives information about stimuli from the thalamus and the cortex, and sends outputs to the hypothalamus and the periaqueductal grey (PAG). The hypothalamus in turn triggers the pituitary gland, changing hormone levels, while the PAG sends signals to internal body organs such as the gut and blood vessels.” The amygdala also plays an important role in learning how to respond to various stimuli: “…the amygdala learns the emotional meaning of stimuli or retrieves it when the stimuli are familiar…Damage to the amygdala seems to prevent monkeys [and people] from associating objects with emotions. Their vision is normal, but they do not seem able to grasp the emotional significance of what they have recognized.” Such damage (or, in the case of teenagers, incomplete development) of the amygdala leads, for example, to fearlessness in the face of stimuli and situations that should evoke a fear response.

As for the other brain areas included in Fig. 6: “…the medial prefrontal cortex (mPFC) forms associations between actions and their results; the anterior cingulate cortex (ACC) is involved in motivation and conflicting desires; the orbitofrontal cortex (OFC) represents stimuli in terms of their value as punishments or rewards…” Damage to any one of these areas leads to a variety of psychological problems. For example, damage to the OFC makes affected humans “oblivious to social and emotional cues and some exhibit sociopathic behavior.” It also leads to an inability to delay gratification (as seen in children before the OFC is fully developed).

The complex cogwebs involved in linking all these brain parts lead to a relatively long-lasting arc of emotional interpretation. As Taylor points out: “because emotional changes can take longer than thoughts to ebb and flow, the link between a thought and its value can be blurred as that same emotional value becomes associated with other active cogwebs.” As we will see, brainwashers seek to exploit this association by manipulating a victim’s emotions and then connecting those emotions with thoughts, ideas and beliefs the brainwasher intends to implant in the victim’s brain.

Ideas and beliefs depend on the formation of associated cogwebs and their strengthening via repeated activation. Some cogwebs are stronger than others, and this affects how the brain deals with new incoming information that appears to contradict existing beliefs. Taylor again: “If the match between the new input and the current brain structure is poor, there will be little information flow through available cogwebs. Either the cogwebs will adjust, or new cogwebs will form to carry away the surplus, or the input signals will be modified (by adjusting subcortical filters, for example) until they are a better fit for the brain’s expectations…Weaker cogwebs tend to change in response to challenging input; as discussed earlier, they are subservient to reality. Stronger cogwebs tend to lead to more input change – and may lead to the formation of new cogwebs to explain away the new information. Here reality is subservient to expectation.”

The brain’s ultimate arbiter in deciding how to respond to new inputs is the prefrontal cortex (PFC), which evaluates responses in the light of expectations based on past experience and learning. The PFC is thus the center of the “stop-and-think” responses of the brain. It is thereby a source of resistance to brainwashing attempts, which seek rather to exploit the rapid responses and instinctive self-protective actions fueled by the simplest, sub-cortical cogwebs.

As Kathleen Taylor explains: “The prefrontal lobe only completes its development in late adolescence, and, like muscles, works better the more it is used…Age stacks up layers of stored knowledge, giving the PFC more history inputs to play with…the neurotransmitter dopamine, which plays a critical role in the PFC, varies significantly depending on which form of a certain gene an individual has…A healthy PFC, well oiled by education and wide experience, allows us to think ahead, to resist temptation (sometimes), and to see past the immediate gratification to the long-term consequences. These are all capabilities inimical to would-be manipulators. Ideally, a brainwashing technique would bypass or usurp the PFC’s role, channelling neural activity to cogwebs implementing the desired beliefs while weakening or erasing the victim’s former convictions.”

Furthermore: “Low educational achievement, dogmatism, stress and other factors which affect prefrontal function encourage simplistic, black-and-white thinking. If you have neglected your neurons, failed to stimulate your synapses, obstinately resisted new experiences, or hammered your prefrontal cortex with drugs (including alcohol), lack of sleep, rollercoaster emotions, or chronic stress, you may well be susceptible to the totalist charms of the next charismatic you meet.”

A particular feature of brain response that a would-be brainwasher must manage is the emotion of reactance that arises when an individual perceives a violation of his or her sense of personal freedom. Anyone who has raised a child through the “terrible twos” is aware of the testing of boundaries that characterizes that stage of development. Much of childhood is devoted to observing the reactions of others to the child’s own behavior, and to learning that those reactions are predictable and that the child can adjust its own behavior in accord with predictions of the behavior’s effects. Those learned elements of predictability and changeability develop feelings of comfort and pride in being able to control one’s behavior, and those feelings contribute to one’s sense of personal freedom.

Cogwebs that go through the PFC get involved in judging whether current external reality is consistent with those experience- and society-influenced predictions. An error signal arising from a disconnect between reality and expectations leads to reactance, i.e., to emotions of fear and anger, and sometimes to a willingness to fight against losing control. Brainwashers must minimize their victims’ reactance against their own efforts to implant beliefs and ideas. But they also often introduce illusory perceived threats from outside groups in order to enhance the victim’s reactance against those outside groups. A recent example of this deflection “trick” was Donald Trump’s demagogic incitement of the Jan. 6, 2021 Capitol riot, based on his manipulation of supporters to feel that their freedom was severely threatened not by him, but by the imminent certification of a purportedly rigged election. He artificially enhanced the reactance emotion among his devotees, stimulating them to commit violent and illegal acts on his behalf, while he could claim a complete lack of personal responsibility for their actions.

IV. How brainwashing works

Brainwashing is the use of psychological techniques to reprogram a victim’s mind, replacing established ideas, values, attitudes and beliefs with ones implanted by those seeking mind control. Generally, the goal of brainwashers is to carry out long-lasting reprogramming on a significant number of targets without each victim being aware of the implantation, so that each thinks of the implanted concepts as of his/her own devising. After surveying a number of historical incidents of brainwashing, Kathleen Taylor summarizes the techniques applied with the acronym ICURE: “The aim is to isolate victims from their previous environment; control what they perceive, think and do; increase uncertainty about previous beliefs; instill new beliefs by repetition; and employ positive and negative emotions to weaken former beliefs and strengthen new ones.”

Figure 7 is the outdated image of brainwashing that perhaps readers are most familiar with. It is a still photo from the 1962 film The Manchurian Candidate, in which a Chinese Communist uses hypnotism to assert mind control over a small group of isolated American prisoners captured during the Korean War. One of the prisoners, played by Laurence Harvey, is programmed to become an assassin under the trigger control of his own creepy mother (Angela Lansbury), who seeks to be the power behind the throne of a husband modeled on Joseph McCarthy.

More generally, sophisticated brainwashers use techniques to induce strong visceral reactions in victims, reactions dominated by rapid sub-cortical, PFC-bypassing neural pathways, such as fear, anger or revulsion. Blunt brainwashing attempts may induce such reactions by applying force or extreme stress or fatigue, but stealthier attempts can elicit the same responses by persistent, repetitive lying and pressure to conform within an isolated group. The influencers then seek to couple the lingering emotional state from those prompt visceral reactions to one or more central ideas – either implanted or pre-existing within the target – that are impervious to external stimuli or reality. Taylor refers to such concepts as “ethereal ideas” supported by cogwebs that have few, if any, direct connections with inputs external to the body, while their internal connections are subject to powerful signals akin to those associated with emotions. Examples of ethereal ideas are religious faith, deep-seated prejudices, self-identification as a victim of “elites,” Donald Trump’s belief that he is incapable of losing, and all “unfalsifiable” concepts, as often appear among conspiracy theorists, cult members, and pseudoscientists.

As Taylor summarizes: “Linking a strong emotion to an ethereal idea provides in effect a false alarm. The manipulated brain reacts as if to an emergency, not stopping to think, simply choosing the most obvious course of action…overriding all contrary ideas, ignoring or suppressing any evidence which does not fit, distorting reality to match the contours of cogwebs massively strengthened by the [manipulated] energies flowing through them.” And the imprecise nature of ethereal ideas makes it straightforward to associate other groups or words with them. As one ongoing example, QAnon influencers evoke fear and disgust among their “disciples” by convincing them they are victims of elites who engage in pedophilia and cannibalism, and they can then associate the victimhood ethereal idea with oppressors including Democrats, Jews, academics, mainstream media, blacks, immigrants, reptilian aliens or any other group against whom the influencers seek to enhance their disciples’ reactance.

For many of those QAnon believers, Donald Trump has succeeded in coupling their sense of victimhood to a second implanted ethereal idea, namely, that whatever it is that ails them, “I alone can fix it.” He and QAnon influencers thus paint Trump as the sole savior who can rid the victims of their scourge, thereby amplifying Trump’s insatiable need for flattery into cult-like “mass rituals of adoration.” External facts to the contrary – such as Trump’s continuing failure to reclaim the Presidency as several QAnon-“predicted” deadlines have passed – have very limited power to override these implanted or reinforced beliefs.

The neural pathways to drive the desired forceful actions are greased if the ethereal ideas have been pre-planted via cultural or societal biases, stereotypes or “frames,” as in the anti-Semitism that pervaded German minds even before Nazi domination. Those societal ethereal ideas may be passed from individual to individual, from parents and teachers to children, from governments to citizens, and they are often reinforced by media. And they are especially influential when presented by charismatic leaders or societies in simple, clear language and doctrines: “Simple, clear doctrines, set out with conviction, can impress others and often attract many followers, especially if those followers have no vehement convictions of their own… Simple, well-publicized ideas give the impression of unity, and hence of strength of purpose. Ethereal ideas, such as truth, justice, tolerance and freedom, are particularly useful for enhancing a society’s charisma: their covert ambiguity widens their appeal [as well as their susceptibility to being co-opted] and they can be stated in a few compelling words.”

Susceptibility to brainwashing varies among individuals due to differences in the density and strength of cogwebs and in the capacity of the prefrontal cortex to provide powerful stop-and-think resistance. “The more alternative paths there are available for the flow of neural activity from input stimulus to output response, the weaker [hence, less easily manipulable] each individual synapse is likely to be. This is why age, education, creativity, and life experience, all of which enrich the cognitive landscape, tend to protect against influence techniques…active cogitation can grow new synapses, which is why ‘use it or lose it’ applies as much to brains as to muscles…” The best individual defense against brainwashing is to exercise one’s brain in stop-and-think activities, such as critical thinking, skepticism and humor, all of which can challenge claimed authority through argument or emotion.

In contrast, those susceptible to brainwashing that emphasizes ingroup vs. outgroup (“us” vs. “them”) hostility may find that the brainwashing satisfies some need for them: “Unlike most viruses, ethereal ideas may be welcomed by their targets, who may fiercely resist attempts to purge the infection. Many of the more hostile reactions to cults underestimate the degree to which they are actually fulfilling their members’ needs, and thereby attracting genuine, consensual commitment. A person infected with an ethereal idea becomes, in the extreme case, that most awe-inspiring of human deformities, the single-issue fanatic. The dominant cogweb drains energy from competing beliefs, gradually shutting down the person’s horizons. The cognitive landscape warps and its scope narrows. Everything becomes interpreted relative to the dominant idea…” And this single-issue fanaticism can lead to atrocities when it is engaged in the furtherance of a totalitarian concept (see Fig. 8).

This variation in susceptibility among individuals presents a challenge to a would-be brainwasher who seeks to apply stealthy, as opposed to coercive, techniques to avoid activating reactance among the intended targets, but who is nevertheless intent on mass control. “The dangers of being discovered are magnified in a population of varying backgrounds, beliefs and desires, more so if that population has access to alternative sources of information…Ideally a brainwasher (whether State or individual) would prefer the target population isolated. If this is not practicable, it may still be possible to make them feel isolated, for example by playing up the danger from external threats (i.e., defining or reinforcing outgroups).” The latter is the Trump technique, hyping dangers posed by the “others,” but simultaneously discrediting the information sources that disagree with the beliefs he tries to implant and inviting his followers (victims) to “self-isolate” in media echo chambers.

In order to influence masses, one also needs help from supportive media and information sources, and talents to keep those helpers engaged. In describing those talents, Taylor seems in her 2004 book to presage with precision the character of Donald Trump’s presidency: “To change belief on a mass scale, given the size of modern societies, is almost certainly out of the question for an individual without group support. To attract this support, an influence technician will…lace his rhetoric with ethereal ideas [e.g., “witch hunt,” “fake news”], cleverly using language to hook the relevant associations into his victims’ brains, making sure that his doctrines are simple and memorable…Although his aim is to make his victims feel more unhappy, so that they are looking for the ‘help’ he is ready to offer, he will do his best to appear likeable, humorous, and human, suppressing challenges to his point of view by derision rather than force, and emphasizing what he has in common with his audience…He will also be careful to avoid any impression of uncertainty, enhancing his charisma by an appearance of single-minded confidence. In all these ways he will hope to gain publicity for his cause, achieving regular access to the media, getting people talking, persuading respected authorities to refer to his ideas as if they are not only reasonable but entirely taken for granted.”

Now that he is no longer in the White House and has been suspended from posting on Facebook and Twitter, Trump is having much greater difficulty in attracting media attention and support for his increasingly outlandish claims. He appears able to retain some portion of his cult supporters, but unable to grow that group. This development provides evidence of the crucial role social media play in contemporary efforts at brainwashing, a subject we take up in the next section.

V. how social media facilitate brainwashing

The central role social media currently play in brainwashing millions of gullible users to believe patent absurdities is evident in the rapid rise and viral spread in 2020 of a few evidence-free conspiracy theories: the QAnon bonkers tale that the U.S. is run by a cabal of elite Satan worshippers who operate sex-trafficking rings and cannibalize children; Donald Trump’s desperately phony claims that the 2020 presidential election was stolen from him by massive election fraud that he and his allies have systematically failed to unearth; calls labeling the COVID-19 pandemic and COVID vaccines as hoaxes perpetrated by elites in order to control the masses, despite the fact that by now the vast majority of Americans know personally of individuals who were infected, hospitalized or died from the disease.

Recent polling from PRRI (Public Religion Research Institute) reveals that 15% of American adults currently agree with the central QAnon claim that “The government, media, and financial worlds in the U.S. are controlled by a group of Satan-worshipping pedophiles who run a global child sex trafficking operation.” The same poll found that 29% agree with the statement “The 2020 election was stolen from Donald Trump.” And 25% still believe that “The U.S. government is using the COVID-19 vaccine to microchip the population,” according to a May 2021 poll by YouGov America. None of these conspiracy theories has had the slightest backing from external reality; none of their “prophecies” has come true. Yet the believers, as is characteristic of the brainwashed, go to great pains to distort external reality and to explain away failed predictions until external inputs are made to conform with their implanted ethereal ideas. And all of these conspiracies are woven around the common implanted ethereal idea that the believers are victims of untrustworthy elites.

The pandemic itself may have contributed to the viral spread of misinformation, since many more person-hours than usual were spent online and burrowing down conspiratorial rabbit holes while large segments of the U.S. and world populations were sheltering at home. But even in the absence of a pandemic the design features of our current social media platforms, as outlined in Section II, facilitate brainwashing. To see this, consider again the ICURE acronym for brainwashing techniques introduced in the preceding section.

I stands for the aim of isolating victims from their previous environment, in order to avoid exposing victims to ideas and arguments that might raise doubts about the implantation. When physical isolation is required, it is best achieved for relatively small groups. But as we explained in Section II, the advertiser-click business model of the social media giants thrives on reinforcing confirmation bias by feeding each individual user what the algorithms determine that user most wants to see. This leads to willing, online self-isolation of masses, not just small groups, where millions of users interact only with users who share their beliefs, no matter how divorced from reality those beliefs may be. The algorithms allow different “tribes” to exist in alternative realities, enhancing in-group vs. out-group identification and distrust of all information sources outside the in-group, thereby increasing uncertainty (U) about previous beliefs.

Ethereal ideas about victimhood and Donald Trump as “savior” have been implanted by exciting strong emotions (E) of fear and anger toward out-group subjugators, for example, by claiming without evidence that they are pedophiles and cannibals, or vicious criminals conspiring to destroy our way of life. Those negative emotions are then reinforced by positive emotions triggered by the dopamine hits users get by attracting positive attention for generating or transmitting misinformation that supports the viral nonsense. In this way, propagation of brainwashed ideas and beliefs is carried out by masses; it no longer requires continual active participation by those who initially launched the misinformation. The dopamine hits support social media addiction, providing the control (C) hook brainwashers seek.

The allowance for anonymous or pseudonymous posters enhances the role of bots in generating and propagating much of the fake information, thereby greatly increasing the repetition (R) of false ideas on which brainwashing relies. The prominence of bots furthermore amplifies perceptions of the size of the tribe, so that potential new converts figure “If so many people believe this stuff, there must be something to it.” The absence of editing, fact-checking, or selectivity in allowing posts further empowers bots, fringe elements and conspiracy theorists who purport to provide simple explanations and villains to blame for in-group victimization.

As with most cults, the bizarre stories that fuel the viral spread, despite the complete lack of evidence to back them up, are welcomed by most of the believers because they fulfill a need to identify people to blame for their perceived victimhood. In the current era of information overload, most individuals don’t bother trying to assess by themselves whether new information is accurate or part of an influence attempt. Rather, they rely on trusted individuals with allegedly greater expertise. These used to be journalists, scientists and statesmen, but after decades devoted to discrediting “elites,” they are now more likely to be only self-anointed experts identified as members of the same “tribe.” And it is, of course, in the nature of tribalism that members of each tribe accuse members of opposing tribes of being brainwashed.

The comfort of the echo chamber dulls reactance, so that victims don’t sense their personal freedom being threatened. As Kathleen Taylor points out, “People can be persuaded to give up objective freedoms and hand over control of their lives to others in return for apparent freedoms—in other words, as long as they are aware of the freedoms they are gaining and either contemptuous, or altogether unaware, of the freedoms which they are giving up.” This is the sort of tradeoff that is already being accepted by the most rabid supporters of Donald Trump, who believe that with him in power they gain “freedoms” to refuse mask-wearing or vaccines to combat the spread of a deadly virus. Yet these supporters seem either unaware or unconcerned that by buying into Trump’s Big Lie about a stolen election, they are ceding control of reality, and potentially of our country, to a sociopathic mind controller.

Of course, elements of this tribalization were already present and fueled by more traditional media before Facebook, Twitter, Instagram, and encrypted chat rooms blossomed. But social media have greatly ramped up the size of the infected tribes and the speed with which they form. Trump’s mastery of Twitter, before his account was finally suspended, was essential to expanding the membership and cementing the devotion of his cult.

VI. possible reforms for social media

What are some of the changes we can imagine that could mitigate the deleterious effects of social media platforms in spreading viral nonsense? Faced with the threat of government regulation, some platforms have begun to label selected posts as potential misinformation, or at least unsupported information, and to ban users who violate rules governing posting of dangerous content. This is the path by which Donald Trump had his Facebook and Twitter accounts suspended for perpetuating the Big Lie that the 2020 election was stolen and inciting his followers to violent attempts to subvert the counting of Electoral College votes on January 6, 2021. But as Renée DiResta points out: “The primary concern is that turning companies into arbiters of truth is a slippery slope, particularly where politically rooted conspiracies are concerned.” And this is especially true in light of the concentration of power behind the social media platforms in the hands of a few individuals, raising all the propaganda concerns that Ed Herman and Noam Chomsky raised in Manufacturing Consent.

The more promising approach is to adopt policies and encourage competition that will gradually wean users off their total reliance on social media for the spread of information. It is noteworthy that a number of Big Tech executives have unplugged themselves and their families from their own media platforms. It will not be easy to wean users because the social media algorithms encourage addiction by exploiting humans’ psychological need for social validation. As Justin Podur has pointed out: “In the face of the propaganda system, Chomsky once famously advocated for a course of ‘intellectual self-defense,’ which of necessity would involve working with others to develop an independent mind. Because the new propaganda system uses your social instincts and your social ties against you, ‘intellectual self-defense’ today will require some measures of ‘social self-defense’ as well.” But individual efforts at self-defense can only go so far; many users have no interest presently in defending themselves against misinformation.

Over a generation, we as a society have reduced the harmful health effects of another addictive product, namely tobacco, by a program of warnings and regulations. The neurological mechanism of addiction is pretty much the same whether the addiction is physical, as with cigarettes, or psychological, as with social media. Recall that Sean Parker says the Facebook goal is accomplished by giving users “a little dopamine hit every once in a while, because someone liked or commented on a photo or a post or whatever.” Perhaps we need to start by attaching to Facebook and other social media platforms a Surgeon General’s warning, akin to the 1964 warning that provided a tipping point in reversing the rapid rise in American cigarette smoking (see Fig. 10). In this case the warning might say: “This platform has been found to pose dangers to your brain health. It is funded by advertiser clicks and uses algorithms intended to confirm your biases and to narrow and weaken your cognitive landscape. It thus tends to make you more susceptible to attempts at mind control.”

Such a warning would then encourage at least some users to switch to competing commercial platforms that rely on a business model devoid of advertising and instead require users to pay directly according to the level of their usage, thereby reducing the incentive toward confirmation bias. Such alternative-model platforms might not be quite as profitable as Facebook, but could still garner substantial earnings without contributing to societal collapse. Even better would be myriad decentralized peer-to-peer social networks maintained by users, with each restricted solely to trusted contacts, eliminating the corporate middlemen focused on bottom-line profits. These would approach Brewster Kahle’s call to “lock the web open.” (By way of full disclosure, my son is presently developing software to support such decentralized, secure peer-to-peer social networks on many computer platforms, intended to overcome many of the challenges that have been noted.) Users of such systems may still seek out posts and opinions supporting their own biases, but they would no longer be steered automatically to dig deeper down the rabbit hole.

Another change worth considering is the banning of all accounts whose users are either anonymous or using an identity that cannot be verified as genuine. Those who use social media platforms to remain in touch with friends and family would be unaffected, but those who intend to spread wild-eyed conspiracies under pseudonyms would be discouraged if their true identities were transparent. And the bots promoting the fake internet would be stripped of their power to deceive.

In any case, the starting point for reform should be widespread dissemination of information about the dangers of social media, coupled with public service advertising campaigns to encourage users to limit their engagement. The documentary The Social Dilemma, featuring a number of the pioneers of social media platforms who have witnessed the danger firsthand, is an excellent start. It should be required viewing in schools throughout the world. Brainwashing cannot be eliminated; there will always be greedy con-men, dishonest politicians and power-hungry cult leaders who crave exercising mind control. But sensible reforms of the social media giants can at least make it harder for them to control large masses of people.

references:

K. Taylor, Brainwashing: The Science of Thought Control (Oxford University Press, 2004), https://www.amazon.com/Brainwashing-Science-Thought-Kathleen-Taylor/dp/0192804960

https://en.wikipedia.org/wiki/Brainwashing

J. Podur, Mind Control: How Social Media Supercharged the Propaganda System, Salon, Jan. 31, 2019, https://www.salon.com/2019/01/31/mind-control-how-social-media-supercharged-the-propaganda-system_partner/

R. DiResta, Social Network Algorithms are Distorting Reality by Boosting Conspiracy Theories, Fast Company, https://www.fastcompany.com/3059742/social-network-algorithms-are-distorting-reality-by-boosting-conspiracy-theories

A. Hern, ‘Never Get High on Your Own Supply’—Why Social Media Bosses Don’t Use Social Media, The Guardian, Jan. 23, 2018, https://www.theguardian.com/media/2018/jan/23/never-get-high-on-your-own-supply-why-social-media-bosses-dont-use-social-media

https://debunkingdenial.com/test-your-critical-thinking-part-i-questions/

https://debunkingdenial.com/how-to-tune-your-bullshit-detector-part-i/

D.J. Boorstin, The Image: A Guide to Pseudo-Events in America (Vintage Books, 1992), https://www.amazon.com/dp/B0082XLP7O/

E.S. Herman and N. Chomsky, Manufacturing Consent: The Political Economy of the Mass Media (Pantheon Books, 1988), https://www.amazon.com/dp/B003IQ16EW/

M.M. Grynbaum, New York Post Reporter Who Wrote False Kamala Harris Story Resigns, New York Times, April 27, 2021, https://www.nytimes.com/2021/04/27/business/media/new-york-post-kamala-harris.html

E. Bell and T. Owen, The Platform Press: How Silicon Valley Reengineered Journalism, https://www.cjr.org/tow_center_reports/platform-press-how-silicon-valley-reengineered-journalism.php

https://en.wikipedia.org/wiki/History_of_Facebook

P. Kafka, Zuckerberg: Facebook Will Have a Business Plan in Three Years, Business Insider, Oct. 9, 2008, https://www.businessinsider.com/2008/10/zuckerberg-facebook-will-have-a-business-plan-in-three-years

https://en.wikipedia.org/wiki/Sheryl_Sandberg

Facebook 2017 Annual Report, https://www.sec.gov/Archives/edgar/data/1326801/000132680118000009/fb-12312017x10k.htm

https://en.wikipedia.org/wiki/Sean_Parker

https://www.sciencedirect.com/topics/agricultural-and-biological-sciences/neurotransmitters

Dopamine, Perception and Values, https://srconstantin.wordpress.com/2014/07/02/dopamine-perception-and-values/

T. Haynes, Dopamine, Smartphones & You: A Battle for Your Time, https://sitn.hms.harvard.edu/flash/2018/dopamine-smartphones-battle-time/

The Science of Addiction, National Institute on Drug Abuse, https://www.drugabuse.gov/sites/default/files/soa_2014.pdf

A. Alter, Irresistible: The Rise of Addictive Technology and the Business of Keeping Us Hooked (Penguin Books, 2018), https://www.amazon.com/dp/B01HNJIK70/

M. Read, How Much of the Internet is Fake? Turns Out, A Lot of It, Actually, New York Magazine, Dec. 26, 2018, https://nymag.com/intelligencer/2018/12/how-much-of-the-internet-is-fake.html

https://en.wikipedia.org/wiki/Ten_Crack_Commandments

M. Allen, Sean Parker Unloads on Facebook: “God only knows what it’s doing to our children’s brains”, Axios, Nov. 9, 2017, https://www.axios.com/sean-parker-unloads-on-facebook-2508036343.html

J.C. Wong, Former Facebook Executive: Social Media is Ripping Society Apart, The Guardian, Dec. 12, 2017, https://www.theguardian.com/technology/2017/dec/11/facebook-former-executive-ripping-society-apart

http://www.humanconnectomeproject.org/

https://dana.org/article/neuroanatomy-the-basics/

https://teenbraintalk.wordpress.com/limbic-system/

https://en.wikipedia.org/wiki/The_Manchurian_Candidate_(1962_film)

https://en.wikipedia.org/wiki/Joseph_McCarthy

X. Marquez, The Mechanisms of Cult Production, in K. Postoutenko and D. Stephanov (Eds.), Ruler Personality Cults from Empires to Nation-States and Beyond: Symbolic Patterns and Interactional Dynamics (Routledge, 2020), pp. 21-45, https://openaccess.wgtn.ac.nz/articles/chapter/The_mechanisms_of_cult_production/12971675/1

https://www.facebook.com/BrainwashingIsBadNews/

https://debunkingdenial.com/qanon-an-ominous-conspiracy-theory/

https://debunkingdenial.com/lies-damned-voting-fraud-lies-and-statistics/

https://debunkingdenial.com/ten-false-narratives-about-the-coronavirus-part-i/

A Year of U.S. Public Opinion on the Coronavirus Pandemic, Pew Research Center, March 5, 2021, https://www.pewresearch.org/2021/03/05/a-year-of-u-s-public-opinion-on-the-coronavirus-pandemic/

Understanding QAnon’s Connection to American Politics, Religion, and Media Consumption, https://www.prri.org/research/qanon-conspiracy-american-politics-report/

K. Frankovic, Vaccine Rejectors Believe the Vaccines Were Not Adequately Tested and Can Cause Infertility, https://today.yougov.com/topics/politics/articles-reports/2021/05/14/vaccine-rejectors-believe-vaccines-not-tested

https://smist08.wordpress.com/2020/10/09/brainwashing-by-social-media/

https://debunkingdenial.com/conservative-alternative-science-confronts-and-is-routed-by-reality/

https://debunkingdenial.com/scientific-tipping-points/

Smoking and Health, Surgeon General’s 1964 Report, https://www.scribd.com/document/199073624/Smoking-and-Health

The Health Consequences of Smoking – 50 Years of Progress: A Report of the Surgeon General, https://pubmed.ncbi.nlm.nih.gov/24455788/

B. Kahle, Locking the Web Open: A Call for a Decentralized Web, http://brewster.kahle.org/2015/08/11/locking-the-web-open-a-call-for-a-distributed-web-2/

C. Barabas, N. Narula and E. Zuckerman, Decentralized Social Networks Sound Great. Too Bad They’ll Never Work, Wired, Sept. 8, 2017, https://www.wired.com/story/decentralized-social-networks-sound-great-too-bad-theyll-never-work/

The Social Dilemma, https://www.netflix.com/title/81254224