(January 15, 2018)

This is part III of our four-part series on Young Earth Creationism. In part I, section 1 we summarized the current scientific understanding of the origin and evolution of the Universe. This was contrasted with the pseudo-scientific “timeline” advocated by Young Earth Creationists. In section 2 we reviewed several independent methods that are used to determine the age of the Earth. These methods included radiometric dating, and analysis of fossils embedded in geologic strata.

In part II we summarized a number of methods used to determine the age of the Universe. We also introduced the Big Bang Scenario (BBS), our current scientific model for the origin and evolution of the Universe.

In this part of our series, we will analyze and debunk pseudo-scientific creationist “theories.” The Big Bang Scenario is buttressed by a vast amount of experimental data, all of which support our understanding that the Earth and solar system are about 4.5 billion years old, and that the Universe is 13.8 billion years old. In order to justify a biblical timeline that claims the Universe and the Earth are both roughly 6,000 years old, it is necessary for Young Earth Creationists to assume that the laws of nature or the fundamental constants (or both) have changed drastically since creation, with the aid of supernatural intervention.

In this section we analyze five different creationist “models” that attempt to reconcile scientific data with the notion that the Universe and Earth are both roughly 6,000 years old. All of these hypotheses fail the tests necessary for a valid scientific theory. First, they concentrate on only a tiny fraction of the immense experimental data that supports the BBS. Second, they are forced to cherry-pick certain results and neglect or discredit all contradictory data. Third, these models account for only a small number of phenomena, and neglect other results altogether. Fourth, the “models” are mutually contradictory, often require numerous “miracles” to sweep away the new problems they create, and sometimes reflect deep misunderstandings of accepted scientific theories.

As a general rule, the creationist “theories” involve assuming the answer (the Universe and the Earth must be roughly 6,000 years old). The laws of nature and fundamental constants are arbitrarily changed in order to achieve this result. In attempting to justify these changes, creationists must either disregard experiments that contradict their assumptions, or they must “correct” experimental data to make them agree with the hypotheses. These methods are completely inconsistent with accepted scientific practices.

4. Debunking Creationist Pseudoscience I: “Magical” claims overthrowing laws of nature

The multi-billion-year age determinations for Earth and the Universe are supported by a wide range of geological, astronomical and cosmological observations, interpreted within a self-consistent theoretical framework that accounts for huge quantities of data. In order to justify the biblical timeline of Fig. 1.2 (see Part I), young Earth creationists are forced to assume that either the laws of nature or fundamental constants of nature have changed dramatically throughout their assumed short history. While they rely basically on the invocation of supernatural intervention to account for such dramatic time-dependences, they also feel compelled to produce “scientific evidence” to support their claims. Such “evidence” contradicts a vast library of observations that clearly establish the constancy of both the laws and the relevant constants of nature. There is no consensus creationist cosmology; different creationists use a variety of scientific-sounding supernatural models to demonstrate that the 6,000-year age of the Universe is reasonable. In this section, we summarize arguments debunking several of the “magical” claims most often used in the arguments of the young Earth creationists.

4.1 “Tired Light”

Young Earth creationists accept that the Universe is vast in size because the Bible says that God stretched out the heavens (one can interpret this as their version of cosmic inflation). But many of them reject the notion that the cosmos is currently expanding. Instead, they offer an alternative “theory” for the observed redshifts of distant stars as originating not from recessional velocity (or equivalently, from the ongoing expansion of space and of light wavelengths), but rather from “tired light.” Tired light models argue that the photons from distant sources lose energy (and thereby increase in wavelength) gradually as they travel through the cosmos toward Earth; the greater the distance, the greater the energy loss. This argument is introduced to account for the Hubble correlation between redshift and distance. The mechanism for such energy loss is light interactions with intervening matter, either by scattering or by absorption and re-radiation of light by that matter.

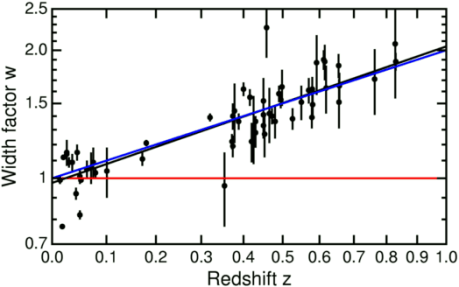

Tired light models are completely ruled out by a number of observations. The scattering or re-radiation of light would alter the direction as well as the energy of photons, thereby blurring the images of distant light sources, in complete contradiction to observations with our most advanced telescopes. In General Relativity it is not only spatial distances but also time intervals that get stretched as the cosmos expands. The time stretch (or time dilation) implies that the well measured light curves of distant Type Ia supernovae (see Fig. 3.1) should appear systematically longer to observers on Earth than the characteristic light curves of nearer supernovae, despite the essentially identical physics of all Type Ia explosions. If light emitted by an exploding star at redshift z=1 decayed over a time period of 20 days as the star exploded, then by the time the light reaches us on Earth that time period should have been dilated by a factor of 1+z=2 to 40 days, just as the light’s wavelength is also increased by the same factor. As shown in Fig. 4.1, time dilation completely consistent with this Big Bang interpretation has been measured for the very distant Type Ia supernovae observed by the Supernova Cosmology Project and the independent High-z Supernova Search teams. The ‘tired light’ hypothesis has no way to account for this time dilation.
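The supernova arithmetic above can be written out as a minimal sketch. The function names are our own, chosen for illustration; the only physics assumed is the 1+z stretch factor described in the text.

```python
def observed_duration(emitted_days: float, z: float) -> float:
    """Duration seen on Earth for an event that lasted `emitted_days`
    in the rest frame of a source at redshift z (cosmological time dilation)."""
    return emitted_days * (1.0 + z)


def observed_wavelength(emitted_nm: float, z: float) -> float:
    """Wavelength seen on Earth; it is stretched by the same 1+z factor."""
    return emitted_nm * (1.0 + z)


# The example from the text: a 20-day decline at redshift z = 1.
print(observed_duration(20.0, 1.0))  # 40.0
```

A tired light model would reproduce the wavelength stretch but would leave the duration untouched, which is why the measured light curve broadening discriminates between the two pictures.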

Tired light models also cannot account for the observed precise blackbody spectrum of the Cosmic Microwave Background (CMB) radiation. Young Earth creationists obviously reject the origin of the CMB as being photons last scattered some 13.8 billion years ago, within the first few hundred thousand years after the Big Bang, when electrons and nuclei first combined to form electrically neutral atoms. They attribute the CMB instead to red-shifted light from distant, but not old, sources. In Big Bang cosmology, the CMB photons followed a blackbody spectrum when they were last scattered (corresponding to an ambient Universe temperature ~3000 degrees above absolute zero at that time) and when we now observe them on Earth (corresponding to a temperature only 2.7 degrees above absolute zero). This blackbody spectrum is maintained because the wavelength of the photons is stretched while the density of photons is simultaneously reduced by the cosmic expansion of space volumes since their last scattering. In contrast, tired light models allow for increasing the wavelength, but do not incorporate any change in the density of photons since their emission. Such models therefore cannot maintain a blackbody form for the spectrum from emission to observation of the light.
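As a rough numerical sketch of this argument, the two temperatures quoted above fix the wavelength stretch factor, and the standard blackbody relation (photon number density proportional to the cube of the temperature) fixes how much the photon density must fall to keep the spectrum thermal. The function names are hypothetical, and the cubic scaling is textbook blackbody physics rather than something stated in this post.

```python
T_LAST_SCATTERING_K = 3000.0  # approximate temperature quoted in the text
T_TODAY_K = 2.7               # approximate CMB temperature today


def stretch_factor(t_emit_k: float, t_obs_k: float) -> float:
    """Wavelength stretch (1+z) implied by the drop in blackbody temperature."""
    return t_emit_k / t_obs_k


def required_density_dilution(stretch: float) -> float:
    """Factor by which photon number density must fall for the stretched
    spectrum to remain a blackbody (number density scales as T**3)."""
    return stretch ** 3


stretch = stretch_factor(T_LAST_SCATTERING_K, T_TODAY_K)
print(f"wavelength stretch: about {stretch:.0f}")
print(f"required photon density dilution: about {required_density_dilution(stretch):.2e}")
```

Expanding space supplies both factors at once, since volumes grow as the cube of the linear stretch. A tired light mechanism supplies only the first, so the redshifted spectrum would carry far too many photons for its temperature.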

4.2 Changes in Light Speed

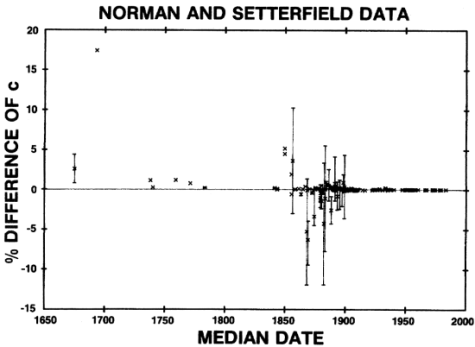

If the speed of light had never changed and the Universe were only 6000 years old, we would be unable to see light from stars more distant than 6000 light years. Since young Earth creationists accept that we have observed galaxies and quasars out to distances of billions of light years, many of them argue that light used to travel much, much faster than it does today (e.g., see https://christiananswers.net/q-aig/aig-c005.html). That point of view seems to have originated with an especially sloppy piece of pseudoscientific research carried out by Barry Setterfield and a collaborator in the 1980s (T.G. Norman and B. Setterfield, The Atomic Constants, Light and Time). Setterfield purported to demonstrate that the measured speed of light had decreased appreciably over the 300 years or so during which it has been measured by a variety of techniques. The data he included are shown in Fig. 4.2. First of all, Setterfield chose to exclude some specific measurements from his data collection, and he chose to “correct” selected others. For example, he corrected the first measurement in 1675 by Roemer from its published value, 24% below the current value for the speed of light, to a corrected value about 2% above the current value. But he made no correction at all to the outlier high measurement in 1693 by Cassini. The crude measurements by Roemer and Cassini were made by the same technique, but they differed by more than 50% in their estimates of the time delay of light emitted by the Sun on its passage to Earth.

Setterfield then proceeded to perform a thoroughly unscientific analysis of his somewhat doctored data set. He effectively extrapolated the spotty “corrected” data from 300 years ago backward in time 6000 years by fitting the data with a poorly justified, complicated exponential curve, according to which the speed of light decayed to its present value from a much higher value at the biblical Creation. Furthermore, he assigned the early crude measurements in Fig. 4.2 the same weight in his fit as the far more precise recent measurements made with much more refined techniques. In this way, the 1693 Cassini outlier result was given massively disproportionate weight. Still, the resulting fit, though meaningless, gives an initial value only about 23 times as large as the current speed of light. Even if the speed had been constant at that initial value, it could still only explain the observation of galaxies out to a distance of about 135,000 light years, not the billions of light years our astronomical observations actually span.
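Two points in this paragraph lend themselves to a short sketch: how far light can travel in 6000 years at a given multiple of today's speed, and how an equal-weight average lets one crude outlier dominate. The function names and the three "measurements" below are hypothetical, invented purely for illustration; they are not Setterfield's data.

```python
def max_visible_distance_ly(speed_in_units_of_c: float, age_years: float) -> float:
    """Farthest distance (light years) light could cover in `age_years`."""
    return speed_in_units_of_c * age_years


def required_speed_in_units_of_c(distance_ly: float, age_years: float) -> float:
    """Constant light speed (in units of today's c) needed to cover `distance_ly`."""
    return distance_ly / age_years


def weighted_mean(values, sigmas):
    """Inverse-variance weighted mean: precise points count for more."""
    weights = [1.0 / s ** 2 for s in sigmas]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


# Reach at 23 times today's speed for 6000 years, versus a billion light years.
print(max_visible_distance_ly(23.0, 6000.0))        # 138000.0
print(required_speed_in_units_of_c(1.0e9, 6000.0))  # roughly 167,000 times c

# Hypothetical speeds in units of c: one crude early outlier, two precise modern values.
values = [1.30, 1.00, 1.00]
sigmas = [0.30, 0.001, 0.001]
print(sum(values) / len(values))      # equal weights: pulled up by the outlier
print(weighted_mean(values, sigmas))  # inverse-variance weights: stays near 1
```

The first result is of the same order as the reach quoted above, and both fall short of a billion light years by a factor of several thousand.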

Aside from the highly questionable analysis on which this suggestion is based, a changing speed of light would introduce enormous problems in accounting for all sorts of physical results. Many natural phenomena and many other fundamental constants of nature depend on the speed of light, and would have to be similarly modified over the Universe’s short biblical lifetime. For example, the fine structure constant that determines the strength of electromagnetic interactions and atomic energy levels varies inversely with the speed of light. If the fine structure constant had a value many times smaller than its present value when light was first emitted from distant galaxies and quasars, we would not observe the frequency spectra of light that we see from these distant objects. In fact, the observed spectra from the most distant quasars allow for variations in the fine structure constant with time since the creation of early galaxies by no more than several parts per million, many orders of magnitude too small an effect to solve the young Earth creationists’ problem.
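The size of the mismatch can be sketched numerically. We assume, as the text states, that the fine structure constant scales as 1/c with everything else held fixed, and we use 5 parts per million as an illustrative stand-in for the "several parts per million" observational bound. That number and the function names are our own assumptions.

```python
ASSUMED_BOUND = 5.0e-6  # illustrative stand-in for "several parts per million"


def alpha_ratio(c_ratio: float) -> float:
    """Fine structure constant relative to today's value if the speed of
    light were `c_ratio` times today's value (alpha scales as 1/c)."""
    return 1.0 / c_ratio


def fractional_change(c_ratio: float) -> float:
    """Fractional shift in alpha relative to today's value."""
    return alpha_ratio(c_ratio) - 1.0


shift = fractional_change(23.0)  # the factor of 23 from Setterfield's fit
print(f"fractional change in alpha: {shift:.3f}")
print(f"exceeds the assumed bound by a factor of about {abs(shift) / ASSUMED_BOUND:.0f}")
```

Even the modest factor of 23 from Setterfield's fit would shift the constant by nearly 100%, some five orders of magnitude beyond what quasar spectra permit under the assumed bound.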

According to the theory of relativity, the energy associated with massive objects is given by Einstein’s famous equation E = mc², where c is the speed of light. If c has decreased dramatically over the past 6000 years, where has all that energy gone? If c were originally much greater, the radioactive decay of nuclei would have occurred much more quickly, so quickly in fact that the heat generated by such decays would have melted the Earth. You cannot arbitrarily change one fundamental constant of nature without upsetting the entire self-consistent apple cart of modern physics!

Some young Earth creationists have suggested an alternative directional dependence of light speed, placing Earth in the central cosmic position suggested by the Bible. They argue that measurements of c determine only the time delay with which a light beam sent out from one position returns to that position upon reflection from a distant mirror. Thus, they argue, one can get the accepted value of light’s average speed if the light travels at only half this speed on the way out from Earth, but at infinite speed on the way back. Then we would be seeing the heavens in real time, since the light from distant galaxies would arrive on Earth instantaneously. There is no science or pseudoscience to back up such a magical dependence on direction, and it is completely inconsistent with measurements on Earth or on Earth satellites. If light and radio waves from Earth traveled to global positioning satellites at only half the speed of light, all our GPS systems would be mis-calibrated and would give us incorrect position determinations.
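A small sketch makes the arithmetic of this proposal concrete. It shows that a round trip at c/2 outbound and infinite speed inbound does average to c, and it shows how far off a one-way uplink time would be at c/2. The satellite altitude of about 20,200 km is our own approximate figure, not one given in this post, and the function names are hypothetical.

```python
import math

C_KM_PER_S = 299_792.458    # speed of light in km/s
GPS_ALTITUDE_KM = 20_200.0  # approximate GPS orbital altitude (our assumption)


def round_trip_average_speed(distance_km: float, speed_out: float, speed_back: float) -> float:
    """Average speed over an out-and-back path with different one-way speeds."""
    total_time = distance_km / speed_out + distance_km / speed_back
    return 2.0 * distance_km / total_time


def one_way_time_s(distance_km: float, speed_km_per_s: float) -> float:
    """Travel time for a single leg at the given speed."""
    return distance_km / speed_km_per_s


# Out at c/2, back at infinite speed: the round-trip average is still c.
print(round_trip_average_speed(1000.0, C_KM_PER_S / 2.0, math.inf))

# One-way uplink time to a satellite under the two assumptions.
normal = one_way_time_s(GPS_ALTITUDE_KM, C_KM_PER_S)
halved = one_way_time_s(GPS_ALTITUDE_KM, C_KM_PER_S / 2.0)
print(f"uplink at c: {normal * 1e3:.1f} ms, uplink at c/2: {halved * 1e3:.1f} ms")
```

Round-trip measurements alone cannot distinguish the two cases, which is the loophole the proposal exploits. One-way timing between separated clocks is where the text argues the idea fails.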

Still another creationist suggestion is that as God stretched the heavens, he started all the light from distant stars and galaxies out at only 6000 light-years removed from Earth. In that case, any astronomical events we observe that appear to have happened more than 6000 years ago, such as hundreds of observed exploding supernovae at distances greater than 6000 light-years, could not have been real, but rather must be illusions programmed into the firmament by God. There is no attempt to provide even a pseudoscientific justification for this assumption, and the approach leads to a very peculiar theology in which God is viewed as the Creator of a cosmic planetarium simulating pre-Creation events that could have occurred via well-established astrophysical mechanisms.

4.3 Earth at the Center of the Universe and “White Hole” Cosmology

The equations that apply General Relativity to Big Bang cosmology (the so-called Friedmann equations) are derived under a simplifying approximation for the sake of convenience. That approximation is known as the cosmological principle, which assumes that the Universe, at least over the distances we can sense it, is on average homogeneous and isotropic: that is, it looks the same from all viewing points and in all viewing directions. This assumption is reasonably consistent with the uniformity we observe in detailed maps (see Fig. 3.8) of cosmic microwave background radiation, which reveal only very small anisotropies typically on the order of 10 parts per million. In this view, the Universe does not have a well-defined “center” and our solar system and Milky Way galaxy do not occupy a “favored” position within it.

The literal biblical interpretation is more or less exactly the opposite of the cosmological principle. In the biblical view, the Earth is at or near the center of the Universe and God stretched the heavens out from Earth’s central position. Young Earth creationists insist on finding evidence for the Earth’s central position, and that central position is crucial to a relatively new creationist “solution” for the distant starlight problem discussed in the preceding subsection.

Let’s deal first with that new creationist ansatz, called “white hole cosmology,” first presented by young Earth creationist Russell Humphreys in his 1994 book Starlight and Time: Solving the Puzzle of Distant Starlight in a Young Universe. Because Humphreys’ approach sounds high-falutin’ and purports to be based on General Relativity, it has been embraced by many creationists as the preferred solution to the distant starlight problem. But as we will see, it is based on a deeply flawed understanding of gravity, General Relativity and Big Bang cosmology. This model is really just another form of the changing light speed account. It is at odds with a great many astronomical observations, and it hardly even qualifies as young Earth creationism!

Black holes are phenomena predicted by General Relativity, where a sufficiently dense, compact mass can distort surrounding space-time to such a great extent that no particles or radiation (including light) can escape from within its so-called “event horizon.” By now, many black holes in the Universe, including a supermassive one at the center of the Milky Way, have been identified astronomically via their gravitational effects on stars outside their event horizon. Most recently, we have experimentally observed the gravitational waves emitted when two mutually orbiting black holes spiral inward toward merger; the 2017 Nobel Prize in Physics was awarded for this observation.

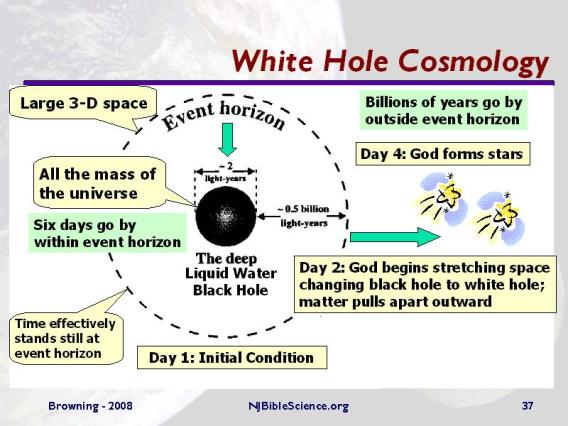

A white hole is the hypothetical inverse of a black hole: it is a region of space-time that cannot be entered from outside but from which matter and light can escape. While General Relativity allows, in principle, for the existence of white holes, there is no known physical mechanism by which such an object could be formed and there is no convincing observational evidence for their existence. But Humphreys’ proposed creationist cosmology assumes that the Universe was created from a white hole, as illustrated in Fig. 4.3.

Humphreys postulates that God converted an initial black hole containing all the watery mass of the Universe into a white hole by extracting matter from inside the event horizon. The Earth remained within the central black hole, but as the mass of the black hole shrank, so did its event horizon, until the event horizon fell inside the Earth’s radius by the end of the 4th day of creation. The central, deeply flawed tenet of Humphreys’ cosmology is that physical time passed at wildly different rates inside vs. outside the event horizon, so that nearly 14 billion years as measured by distant stars corresponded to only six 24-hour days of Earth time. After those first six days, Earth time and distant star time measurements agreed. As we explain below, the “science” of this “model” is completely inconsistent with the General Relativity on which it is purportedly based (for a more detailed exposition of the model’s deep and extensive flaws, see http://web.archive.org/web/20121107195343/http://trueorigin.org/rh_connpage1.pdf ).

But even theologically, the white hole cosmology account differs from standard young Earth creationism, veering instead toward the metaphorical interpretation of time passage during Creation. It also implies that the speed of light reaching Earth from the distant stars on Earth days 4–6 would have been measured by Earth’s extremely slow clocks as enormously greater than the current value. As a result, Humphreys’ approach is really just another, somewhat extreme, form of unsupported light speed variation. Furthermore, the exposure of Earth to 14 billion years’ worth of starlight during only a few days would have caused extreme heating and melting of the early Earth.

The pseudoscience of Humphreys’ approach relies on the following four central tenets:

- Big Bang cosmology relies on the existence of an unbounded, homogeneous, isotropic Universe;

- gravity acts very differently in a finite, bounded vs. unbounded Universe;

- physical clocks can run very differently in different parts of an expanding bounded Universe;

- time stands still at the event horizon of a black hole.

All four of these are untrue, and reflect a profound misunderstanding of General Relativity and even of classical gravity. Already in the 17th century, Isaac Newton used calculus to prove that, according to his proposed Law of Universal Gravitation, mass distributed symmetrically within a spherical shell exerts no net gravitational force and has no impact on gravitational fields inside that shell. This result is taught to first-year physics students, and it remains true in General Relativity. Thus, the mass in a possibly unbounded homogeneous Universe that falls outside the outer radius of Humphreys’ assumed bounded, homogeneous, spherical Universe would have no effect whatsoever on gravitational fields experienced inside Humphreys’ bound. It is then completely sufficient for Big Bang cosmology to assume that the Universe is approximately homogeneous and isotropic only as far as one can see. Hence, the first two of Humphreys’ assumptions above are incorrect.
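Newton's shell result is easy to check numerically. The sketch below slices a thin uniform shell into rings and sums their pull on a test point along the axis, in units where G = 1. The function name, the unit choices and the midpoint-rule integration are our own; this is an illustration of the classical theorem, not a General Relativity calculation.

```python
import math


def shell_axial_field(r: float, shell_radius: float = 1.0,
                      shell_mass: float = 1.0, steps: int = 20_000) -> float:
    """Gravitational field (G = 1) along the axis at distance r from the centre
    of a thin uniform spherical shell, summed ring by ring (midpoint rule).
    Negative values point back toward the centre."""
    d_theta = math.pi / steps
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) * d_theta
        # Mass of the ring at polar angle theta on a uniform shell.
        ring_mass = 0.5 * shell_mass * math.sin(theta) * d_theta
        # Axial offset from the test point to the ring, and squared distance.
        dz = shell_radius * math.cos(theta) - r
        dist_sq = shell_radius ** 2 + r ** 2 - 2.0 * shell_radius * r * math.cos(theta)
        total += ring_mass * dz / dist_sq ** 1.5
    return total


print(f"inside the shell (r = 0.5):  {shell_axial_field(0.5):.2e}")
print(f"outside the shell (r = 2.0): {shell_axial_field(2.0):.6f}")
```

Inside the shell the contributions cancel to within numerical error, while outside the shell the field matches that of a point mass at the centre. Matter lying beyond any chosen spherical boundary of a homogeneous Universe therefore adds nothing to the gravitational field inside it.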

Humphreys’ third and fourth assumptions reflect his misunderstanding of physical time in General Relativity. The proper, or physical, time measured in any galaxy in an expanding Universe is measured by clocks that are “co-moving” with that galaxy. All such co-moving clocks measure precisely the same time intervals, known as cosmological time; they can all be synchronized, as Humphreys himself admits in Starlight and Time. Thus, the proper time passage since the Universe’s birth must be the same on Earth as in any distant galaxy, and this is true regardless of whether the Universe is bounded or unbounded, and also regardless of the coordinate system one uses to describe the motion of the galaxies. There is still time dilation for all co-moving clocks, as discussed in association with Fig. 4.1: the physical time interval between two events that occurred long ago in a distant galaxy is stretched by the quantity 1+z by the time light signals associated with those events arrive on Earth, because the ongoing expansion stretches spatial and time intervals as cosmological time proceeds.

Instead of cosmological time, Humphreys uses something called Schwarzschild time to represent time passage on Earth. But this is time as would be measured not by a clock that is fixed on Earth as the Universe expands, but rather by an observer who is moving through space with respect to Earth. Not only that, but in Humphreys’ model the Schwarzschild clock would have to move with respect to Earth at speeds exceeding that of light in the early Universe, so that such an observer is not even physically possible according to the laws of relativity. Under no conditions in General Relativity does Schwarzschild time represent physical time passage on Earth. It is thus closely akin to the “metaphorical” time of creation assumed by those who interpret the Bible less literally than young Earth creationists.

In the same vein, proper time does not effectively “stand still” at the event horizon as claimed by Humphreys. A clock co-moving with the event horizon records cosmological time passage at exactly the same rate as all other co-moving clocks in the expanding Universe. Humphreys invokes his “time stands still” assumption to argue that starlight already emitted during days 2-4 of Earth creation would not have been able to penetrate inside the event horizon to reach Earth until late on day 4, when the event horizon reached Earth. This assumption allows his model to be consistent with the biblical account, but it is inconsistent with General Relativity, which certainly allows light from distant stars to penetrate inward through the event horizon.

Humphreys does attribute the observed redshifts of distant starlight to the expansion of the cosmos, but because much of that expansion occurred during only six Earth days in his model, the expansion history is completely different from the Big Bang cosmology account. In particular, the Hubble constant as measured on Earth would have had to be enormously greater than its present measured value during those first six creation days of Earth time. If this were true, Hubble’s Law would not be valid over large cosmic distances, because the light passage time intervals over which the Hubble constant would have been averaged would be very different for ancient, distant galaxies and quasars than for younger near ones. There is nothing in the extensive catalog of redshift measurements that is even remotely consistent with Humphreys’ model. White hole cosmology is just another pseudoscientific model aimed at providing a sophisticated-sounding justification for the biblical account, without accounting quantitatively for scientific measurements.

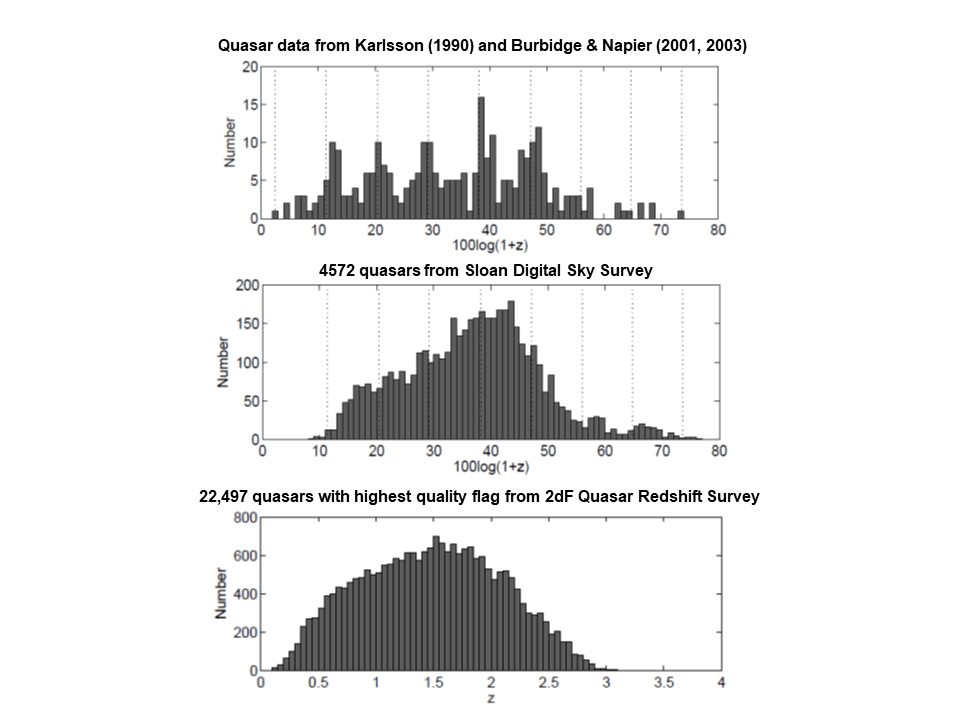

Young Earth creationists are quick to seize upon any astronomical data or cosmological model, no matter how poorly tested, that appears to suggest that Earth (or at least the Milky Way) does indeed occupy a central location in the Universe, in contradiction to the cosmological principle. In keeping with the standard approach of science deniers, they then systematically ignore or attempt to discredit subsequent larger-sample data or model tests that refute their favored observations or models. A popular topic among creationists is that of so-called “quantized redshifts”. An apparent periodicity in measured redshift values was first reported by Tifft in 1980 (Astrophysical Journal 236, 70 (1980)) and followed up in an analysis of quasar redshifts by Karlsson in 1990 (see K.G. Karlsson, Astronomy and Astrophysics 239, 50 (1990) and G.R. Burbidge and W.M. Napier, Astronomical Journal 121, 21 (2001)). These analyses were based on samples containing a grand total of about 200 astronomical objects each, and they suggested different periodicities. But young Earth creationists were quick to point out that the analyses suggested that galaxies in the Universe were clustered in spherical shells surrounding the Milky Way at the center, affording Earth its biblical favored position within the Universe.

More recent and complete sky surveys have now yielded redshift measurements for more than a million distant galaxies and over 150,000 distant quasars. Analyses of these vastly larger data samples reveal no signs of the alleged quantization seen in the above earlier studies, which may have been affected by some unrecognized redshift-dependent instrumental bias in sample selection. A sample comparison of Karlsson’s compilation with that of more recent collections of quasar data in Fig. 4.4 shows that any indication of periodicity appears to vanish in the much larger samples. There is, of course, actual galaxy clustering in the Universe, arising in the Big Bang Scenario from slight matter density fluctuations in the infant Universe, but there is no indication from the redshifts that these clusters are centered on the Milky Way.

A more recent argument made by creationists for Earth’s favored biblical position follows from a 2008 suggestion (e.g., K. Enqvist, General Relativity and Gravitation 40, 451 (2008)) by cosmological theorists that the mysterious dark energy invoked in the Lambda-CDM model to account for the apparent ongoing acceleration of cosmic expansion could be avoided if one gave up completely on the cosmological principle. In contrast to Humphreys’ white hole cosmology, where Earth starts out in an ultra-dense black hole, these cosmologists suggested that the Milky Way might exist in a favored position very near the center of a giant void in the Universe, a vastly underdense region of size on the order of hundreds of megaparsecs. They claimed this could then explain the redshifts of distant supernovae without introducing dark energy.

However, explaining the supernova data is not enough. The existence of the accelerating expansion has been confirmed by the extensive CMB and baryon acoustic oscillation measurements referred to in Sec. 3 of this post. As was pointed out very shortly after the original void suggestion (J.P. Zibin et al., Physical Review Letters 101, 251303 (2008)), the giant void model is incapable of accounting quantitatively and simultaneously for all of these data, with CMB temperature and Hubble constant values that are at all consistent with observations. In contrast, the Lambda-CDM model described in Sec. 3.7, including the mysterious but numerically constrained dark energy density, accounts spectacularly well for all of these data, as illustrated in part by Fig. 3.9, with no more adjustable parameters than the void model.

In summary, all of the various cosmological “models” suggested by young Earth creationists fail to survive scientific scrutiny. They are unable to account quantitatively for the now extensive cosmological data; their basic tenets are refuted by scientific measurements and well-tested physics theories. They do not undermine Big Bang cosmology in any way.

4.4 “Accelerated Radioactive Decay”

We have demonstrated that young Earth creationists face an intractable challenge in explaining how light from galaxies billions of light years away from Earth can have reached Earth in only 6000 years. In addition, they face an equally daunting task to explain how the radiometric dating of meteorites and rocks that establishes the age of the Earth and the solar system can be mistaken by factors approaching one million. As in the case of the fatally flawed model of white hole cosmology, many creationists again rely on a fantastic hypothesis proposed by Russell Humphreys. Humphreys calls his hypothesis “accelerated nuclear decay,” proposing “that episodes with billion-fold speed-ups of nuclear decay occurred in the recent past, such as during the Genesis flood, the Fall of Adam or early Creation week.” The mechanism proposed to account for such acceleration is divine intervention that momentarily alters the strong force in Nature (the one relevant to alpha-particle decay of heavy nuclei) and/or the weak force (relevant to beta decay).
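The "factors approaching one million" figure follows directly from the ages quoted earlier in this post. A minimal sketch, with function and constant names of our own choosing:

```python
EARTH_AGE_YEARS = 4.5e9      # radiometric age of Earth and solar system
UNIVERSE_AGE_YEARS = 13.8e9  # age of the Universe
YOUNG_EARTH_YEARS = 6000.0   # biblical timeline assumed by young Earth creationists


def discrepancy_factor(measured_age_years: float, claimed_age_years: float) -> float:
    """How many times larger the measured age is than the claimed age."""
    return measured_age_years / claimed_age_years


print(discrepancy_factor(EARTH_AGE_YEARS, YOUNG_EARTH_YEARS))     # 750000.0
print(discrepancy_factor(UNIVERSE_AGE_YEARS, YOUNG_EARTH_YEARS))  # about 2.3 million
```

Any proposed correction to radiometric dating therefore has to account for an error of nearly six orders of magnitude, not a modest calibration shift.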

In order to provide some experimental evidence in alleged support of accelerated decay, creationists ignore reliable measurements (see section 2 in part I) of final decay daughter isotopic abundances (such as those of lead isotopes) in rock and the extremely well-tested theory of radioactive decay. They focus instead on the far more complex and environment-, material- and history-dependent processes by which helium atoms diffuse through rock. They attribute all of the helium still present to the emission of alpha particles during the radioactive decay chains of naturally occurring uranium and thorium. Humphreys’ team estimated how much of that radiogenic helium should have remained in microscopic rock crystals, called zircons, radio-dated as 1.5 billion years old, and claimed that the measured helium content strongly exceeded their estimate.

Humphreys and collaborators further claimed that laboratory measurements of helium release rates as a function of temperature for two of those crystals supported their estimate. These laboratory measurements were made some 30 years after the samples were excavated, with the samples under vacuum and in entirely different environmental conditions than they experienced underground prior to excavation. The flawed results were then applied to different types of rock excavated from greater depths than the tested samples. On that basis, they concluded initially that “1.5 billion years’ worth of nuclear decay took place in one or more short episodes between 4,000 and 14,000 years ago.” Subsequently, they narrowed that age estimate to 6,000 ± 2,000 years.

The analysis carried out by Humphreys’ RATE (Radioisotopes and the Age of The Earth) project represents a useful case study in how pseudoscience skews assumptions, cherry-picks and applies poorly justified “corrections” to data, oversimplifies models and misrepresents other science in an attempt to provide after-the-fact justification for a predetermined result.

The highly questionable method of helium diffusion dating relies on three distinct factors:

- measurement of the present concentration (Q) of radiogenic helium-4 atoms in the crystal samples of interest;

- an estimate of the concentration (Q0) of radiogenic helium-4 that would have resulted from the cumulative alpha-decay of heavy radioactive nuclei originally present in those same samples, in the absence of helium diffusion;

- a scientific model of the helium diffusion rate, accounting for the environmental (temperature, pressure, melting, exposure to external helium sources, etc.) history and crystal structure (including defects) of the samples, that can allow extraction of an age estimate from the Q/Q0 helium retention ratios.

In each of these three aspects, the Humphreys study is fatally flawed.

The zircon samples analyzed by the RATE team were extracted over a range of depths from the Fenton Hill site in New Mexico’s Jemez Mountains. The original measurements of helium concentration Q, carried out by heating the samples to release the helium, were reported in 1982 by R.V. Gentry, et al. (Geophysical Research Letters 9, 1129 (1982)). Gentry’s measurements made no attempt to distinguish radiogenic from other helium sources, such as primordial helium (from Big Bang nucleosynthesis) captured in Earth’s crust, or helium released in volcanic eruptions (the Fenton Hill site is quite close to a volcano that last erupted some 130,000 years ago). For example, there was no attempt to measure helium-3 and helium-4 concentrations separately. Such a measurement would be helpful because helium-3 is not emitted in radioactive decay, so that its concentration would help, via the known relative isotopic abundances, to constrain how much of the helium-4 could be of non-radiogenic origin.

Humphreys, et al. then modified all of their Q data from the values reported in Gentry et al., reducing most of the numbers by a factor of 10, but by an even larger factor for some depths. These unsupported changes are attributed, with little explanation, to “likely typographic errors” in Gentry’s tables (for which the original logbooks are no longer available). The “corrections” serve the purpose of avoiding the embarrassing occurrence of Q/Q0 ratios much larger than one.

Humphreys’ estimate of Q0 is even more problematic. Gentry made an initial estimate of Q0 based upon concentration measurements of lead isotopes (with mass numbers 206, 207 and 208) in different zircon samples that had been extracted from nearby sites and analyzed independently a few years before Gentry’s work. The three lead isotopes would have been produced as radioactive decay daughters from different uranium and thorium isotopes, whose decay chains each involve the emission of different numbers of alpha particles. He included rough corrections for the fact that some alpha particles emitted near the surface of the zircons would have escaped during the original radioactive decay processes.
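The bookkeeping behind a lead-based Q0 estimate can be sketched as follows. Each alpha particle carries away four mass units, so the number of alphas per decay chain follows from the parent and daughter mass numbers. The sketch assumes that all of the measured lead is radiogenic and that no lead has been lost. The `alpha_retention` parameter is a hypothetical stand-in for Gentry's rough correction for alphas escaping near the crystal surface, and the function names are ours.

```python
# Daughter lead isotope -> parent mass number (U-238, U-235, Th-232).
PARENT_OF_LEAD = {206: 238, 207: 235, 208: 232}

def alphas_per_chain(parent_mass_number, daughter_mass_number):
    """Alpha decays change the mass number by 4; beta decays leave it unchanged."""
    diff = parent_mass_number - daughter_mass_number
    if diff < 0 or diff % 4 != 0:
        raise ValueError("mass numbers are not linked by a whole number of alphas")
    return diff // 4

def q0_from_lead(lead_atoms, alpha_retention=1.0):
    """Estimate the helium-4 atoms produced, given counts of radiogenic lead atoms.

    lead_atoms maps lead mass number (206, 207, 208) to a number of atoms.
    alpha_retention is the assumed fraction of alphas that stop inside the crystal.
    """
    produced = sum(
        alphas_per_chain(PARENT_OF_LEAD[mass], mass) * count
        for mass, count in lead_atoms.items()
    )
    return alpha_retention * produced
```

The three chains yield 8, 7 and 6 alpha particles respectively, so the estimate depends on the isotopic mix of the lead and not only on its total amount.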

Having assumed massive typographical errors in Gentry’s Q values, the RATE team went on to assume that Gentry’s Q0 value was similarly mis-reported. With that assumption, they could take Gentry’s Q/Q0 estimates to be accurate, since those values helped them to make their predetermined case for accelerated nuclear decay. They then came up with their own estimate for Q0 by assuming the correctness of Gentry’s largest Q/Q0 ratio (for the shallowest sample) and the RATE team’s own reinterpretation of Gentry’s “corrected” Q value for that sample. They assumed the same Q0 value for all samples, despite the fact that the radiogenic helium-4 accumulation in each crystal should depend on its size, on its initial uranium and thorium (and possibly radon) concentrations, and thereby on its environmental history.

The RATE team’s procedure is equivalent to assuming the answer you need to prove your predetermined conclusion; it is not science in any recognizable form. Independent, simple calculations (http://www.asa3.org/ASA/education/origins/helium-gl3.pdf, http://www.talkorigins.org/faqs/helium/zircons.html) of Q0, based on the very same assumptions Gentry reported in his original paper, have been unable to reproduce Humphreys’ value, yielding estimates nearly a factor of three larger, and hence smaller Q/Q0.

Finally, the diffusion model adopted by Humphreys, et al. was greatly oversimplified. As pointed out by Loechelt, a materials engineer with experience in noble gas diffusion issues, the RATE team model suffered from three egregious flaws, plus other more minor errors. First, they interpreted their laboratory diffusion data using a simple analytic formula known to have problems at low temperatures. Yet they applied this model to extrapolate the high-temperature behavior in zircon crystals from their lowest-temperature measurements, which accounted for only 15 parts per million of the total helium release. Second, Humphreys, et al. assumed the same diffusion rate for all helium atoms in the samples, ignoring well-known large differences in diffusion rate at low temperatures between the atoms tightly bound to crystal lattice sites and those loosely bound to defect sites. Even though the atoms at defects may represent a small portion of all helium atoms in the sample, that portion dominates the low-temperature diffusion. Thus, extrapolating from the low-temperature measurements would have caused Humphreys’ team to overestimate the higher-temperature diffusion rate by a large factor.

In addition, the RATE team’s model assumed that the zircon crystals at the Fenton Hill site had always been at the temperatures measured when they were extracted. That assumption ignores the geologic evidence that those temperatures were much lower over most of the radiometric age, and were raised substantially by nearby volcanic activity not too long ago, on geologic time scales. Again, this error leads to an overestimate of the helium diffusion rate, which depends strongly on temperature. Other problems with the RATE team model include the use of incorrect sizes of their zircon crystals and improper treatment of the interface between the zircons and the surrounding biotite material, which has much higher diffusion rates. When the RATE team had made enough errors in sample handling, data analysis and modeling to get the answer they wanted, they stopped worrying about what might have been wrong with their work.
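The sketch below illustrates two of the points above: diffusion rates depend steeply on temperature, and loosely bound (defect) helium can diffuse far faster than lattice-bound helium at low temperatures. It uses two Arrhenius branches. Every parameter value is hypothetical and chosen only for illustration. None is a measured zircon property, and none is taken from the RATE or Loechelt analyses.

```python
import math

R = 8.314  # gas constant, J/(mol K)

# Hypothetical illustration values, NOT measured zircon parameters.
E_LATTICE = 160e3   # activation energy for lattice-bound helium, J/mol
E_DEFECT = 60e3     # activation energy for defect-bound helium, J/mol
D0_LATTICE = 1.0    # prefactor in arbitrary units
T_CROSS = 600.0     # temperature (K) at which the two branches are equal

# Choose the defect prefactor so that the branches cross at T_CROSS.
D0_DEFECT = D0_LATTICE * math.exp(-(E_LATTICE - E_DEFECT) / (R * T_CROSS))

def arrhenius(d0, activation_energy, temperature_k):
    """Arrhenius law: D = D0 * exp(-E / (R * T))."""
    return d0 * math.exp(-activation_energy / (R * temperature_k))

def lattice_rate(temperature_k):
    return arrhenius(D0_LATTICE, E_LATTICE, temperature_k)

def defect_rate(temperature_k):
    return arrhenius(D0_DEFECT, E_DEFECT, temperature_k)

def defect_to_lattice_ratio(temperature_k):
    """How much faster the defect branch is than the lattice branch."""
    return defect_rate(temperature_k) / lattice_rate(temperature_k)
```

With these made-up numbers, the defect branch is more than four orders of magnitude faster than the lattice branch at 400 K, and the lattice rate itself changes by a similar factor between 400 K and 500 K. A single diffusion rate and a single fixed temperature history cannot capture behavior of that kind.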

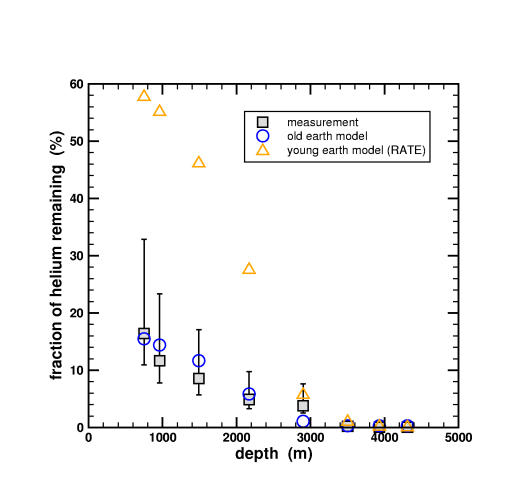

To summarize: when one adopts a more reasonable, and better justified, estimate of Q0 values for the zircon crystals and applies a diffusion model more consistent with the known zircon crystals and known diffusion science, Humphreys’ quoted helium concentrations Q seem completely compatible with the 1.5 billion year age of the rocks determined by the far more reliable conventional radiometric dating techniques. This point is illustrated in Fig. 4.5, which plots the helium retention fraction Q/Q0 as a function of the depth from which the various zircon crystals were extracted.

The red triangles in Fig. 4.5 represent the diffusion model estimates for the young Earth assumption of Humphreys, et al., carefully fiddled to fit their erroneous Q/Q0 estimates. The revised Q0 estimates from Loechelt yield the data represented by square symbols with error bars; if one uses instead the independent estimate of Q0 from Henke, the retention fractions are consistent with Loechelt’s results. The blue circles in Fig. 4.5 represent calculations based on Loechelt’s more sensible helium diffusion model, assuming that the zircon samples are indeed as old (1.5 billion years) as indicated by their radiometric dating. The results in Fig. 4.5 therefore suggest that the helium content in these samples, as reported in papers by Humphreys, et al., is consistent with their radiometric dating. But the real conclusion is that radiometric dating is far more reliable, and less dependent on complex details and assumptions, than helium diffusion dating.

Not surprisingly, Humphreys et al. disagree with the latter statement. While they are perfectly willing to allow for extraordinary supernatural changes in nuclear decay rates, they view helium diffusion physics as sacrosanct and unchanging over time, as revealed in the following quote:

“But diffusion rates are tied straightforwardly to the laws of atomic physics, which are in turn intimately connected to the biochemical processes that sustain life. It is difficult to imagine any such drastic difference in atomic physics that would have allowed life on earth to exist.”

Apparently it is much easier for them to imagine that life on Earth could have survived the fatal levels of radioactivity that would have been released into the atmosphere by enhancing nuclear decay rates by a factor of a billion or so. Or that the very rocks they are studying could have survived melting under the intense heat generated by 1.5 billion years’ worth of radioactive decay compressed into a short period of time. After all, it is the heat from radioactive decay of heavy nuclei at their measured rates that keeps the core of the Earth molten. So a billion-fold increase in these decay rates would melt or boil the entire Earth. Young Earth creationists attempt to explain away these fundamental problems with their unrealistic conclusion by invoking supernatural simultaneous cooling of Earth via the stretching of the heavens, or by shielding of radiation by the waters of the Great Flood.
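A rough back-of-the-envelope estimate shows the scale of the heat problem for the rocks themselves. The inputs are round, textbook-level values for a typical granite that we assume for illustration. They are not measurements from Fenton Hill. The sketch also assumes that the present-day heat production rate applies over the whole 1.5 billion years, that no heat escapes during the accelerated episode, and that latent heat can be ignored.

```python
SECONDS_PER_YEAR = 3.156e7

# Assumed round values for a typical granite (illustrative only).
HEAT_PRODUCTION_W_PER_M3 = 2.5e-6   # radiogenic heating at today's decay rates
DENSITY_KG_PER_M3 = 2700.0
SPECIFIC_HEAT_J_PER_KG_K = 800.0

def temperature_rise_kelvin(decay_span_years):
    """Temperature rise if this many years of decay heat were released with no heat loss."""
    power_per_kg = HEAT_PRODUCTION_W_PER_M3 / DENSITY_KG_PER_M3
    energy_per_kg = power_per_kg * decay_span_years * SECONDS_PER_YEAR
    return energy_per_kg / SPECIFIC_HEAT_J_PER_KG_K

rise = temperature_rise_kelvin(1.5e9)
```

Under these assumptions the rise comes out at tens of thousands of kelvin, far beyond the melting point of any rock. The details of the inputs hardly matter: changing any of them by a factor of a few does not rescue the zircons.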

In the end, then, they are reduced, as in all creationist pseudoscience, to simply assuming some supernatural mechanism in order to overcome all the counter-evidence provided by science. Radiometric dating of Earth rocks and meteorites using multiple isotopes gives consistent age estimates in excess of four billion years for the solar system. All of these decay processes would have to be sped up by the same unrealistic factor to allow for a young Earth. There is simply no evidence whatsoever of any natural mechanism to change nuclear decay rates by anything more than tiny percentages, depending on the environment experienced by the decaying nuclei. Despite all the fundamental flaws in the RATE team’s work, many young Earth creationists have gladly spread the gospel of accelerated nuclear decay.

4.5 Flood Geology

As explained in Sec. 2, the geologic timeline of the Earth in Fig. 2.5 is determined from the detailed analysis of sequentially deposited layers in Earth’s rock formations, such as the Grand Canyon, and of the distinct fossils found within them. Young Earth creationists not only must discredit the radiometric dating of fossils used in constructing the geologic timeline, but they also feel compelled to explain how the long sequence of rock strata could have been deposited in a very brief geologic time period. They assume that the time period for this accelerated deposition is the several months of the Great Flood described in the Book of Genesis.

Young Earth creationists rely on the magical impacts of the biblical flood to account (albeit highly unsuccessfully) for most geologic features on Earth, as well as to debunk evolution of the species and scientific determinations of the age of the Earth. They attempt to justify their belief in flood geology by employing the following circular reasoning:

- the Bible says the Earth is only thousands of years old;

- observed deformations in some sedimentary rock strata (e.g., in the Grand Canyon) could not possibly have developed in hard, dry rock over such a short period of time;

- therefore the deformations must have been produced during a period when the sediments were soft and wet;

- since such deformations are observed all over the Earth, they must have occurred during the global flood;

- furthermore, the fossils embedded within the rocks could not have survived unless the strata were all deposited over a short period of time.

They assume the answer they seek to prove in order to disregard geologic evidence that hard, dry rocks can deform over millions of years under the influence of pressure, heating and tectonic forces, and that fossilization can occur over long periods of time or during short events that lay down individual strata at quite different times.

Young Earth creationists further argue that the distinct fossils found in different rock strata are not indicative of a time sequence, despite the clear evidence from radiometric dating of similar fossils. Rather, they insist that the various species all co-existed, including humans and dinosaurs and single-celled organisms, and the apparent segregation in different rock strata simply indicates how successful the various species would have been in seeking higher ground to avoid the advancing flood waters. In this approach, one must assume a remarkable uniformity of physical attributes among each species, so that no weak specimens appear in strata below their natural level or especially strong or lucky specimens in higher strata. In particular, nearly all fossil species are extinct today; we don’t find weaker specimens of living species in the alleged debris of the Great Flood. The scientific interpretation of a multi-billion-year geologic time sequence revealed in the strata is completely consistent with independent evidence for the gradual evolution of the species over billions of years. We will deal with this issue in a later blog entry on this site.

The young Earth creationists’ complete disregard for the radiometric dating of the fossils introduces yet more serious problems with flood geology. Creationists must rely on the assumption of accelerated radioactive decay during the year of the biblical flood. Furthermore, since the radiometric ages of fossils found in different strata are quite different, the degree of acceleration of radioactive decay in the flood geology scenario would have to be strongly correlated with the physical ability of species to outrun the flood waters. But, as mentioned in Sec. 4.4 above, the extreme heat generated by such accelerated radioactivity would have melted all of the sediment, destroying the alleged layered evidence of the flood. Furthermore, the incredibly intense radioactivity released would have killed all the specimens of land animals Noah was attempting to preserve on the ark. Young Earth creationists thus have to invoke numerous supernatural mechanisms to explain away these problems. Their efforts lead to a theology in which God went to great lengths simply to trick humans into seeing evidence for an old Earth and a geologic layering that occurred over many millions of years.

A worldwide flood would have shaped rock formations globally in similar ways. The expectations from a global flood are inconsistent with a wide array of observed geologic features on Earth, as catalogued for example in Weber 1980. Flood geology was scientifically popular in the 17th and 18th centuries, but such models are totally incapable of providing coherent accounts for the vast amounts of scientific evidence collected across multiple subfields since that time. In the scientific method, models and theories must evolve over time to account for newly acquired experimental evidence. By contrast, flood geology scenarios are required to ignore or explain away all evidence that contradicts them.

— To be continued in Part IV —